Michal Lipson's Team Wins $4.7M DARPA Grant to Revolutionize Augmented Reality Glasses

Imagine driving along the highway with directions and other pertinent information (think gas stations) appearing “on” your glasses so you don’t have to look away from the road. Or building a complicated DIY project with step-by-step instructions appearing “on” your glasses. Or being a first responder unsure which way to go, receiving information from central headquarters “on” your glasses. Augmented reality (AR) glasses can already do all of this, but current models are heavy, bulky, and power-hungry. Most people, especially those in the field, cannot wear them comfortably for very long.

An interdisciplinary Columbia Engineering team is working with colleagues at Stanford, UMass Amherst, and Trex Enterprises Corporation to come up with an alternative solution. Thanks to a $4.7 million, four-year grant from DARPA, they are developing a revolutionary lightweight glass that is able to dynamically monitor the wearer’s vision and display contextual images that are vision-corrected.

“We are creating a game-changer, a completely novel glass design that enables high resolution projection and detection of light with no moving parts,” says Michal Lipson, Eugene Higgins Professor of Electrical Engineering at Columbia, a pioneer in nanophotonics who is leading the team. “Our design will be a key technology enabler for the Department of Defense, industry, and the general public. Our ultimate deliverable will be an ultrahigh-resolution, see-through, head-mounted display with a large field of view and vastly reduced SWaP (size, weight, and power consumption), coupled with the ability to correct users’ ocular aberrations in real time and project aberration-corrected visible contextual information onto the retina.”

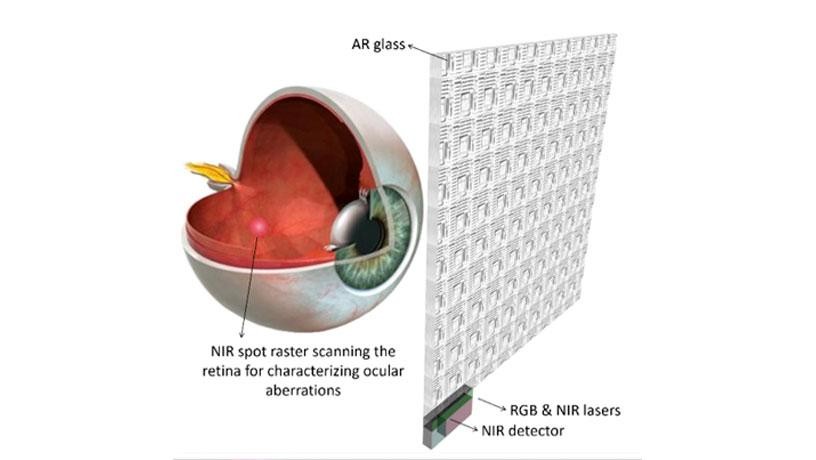

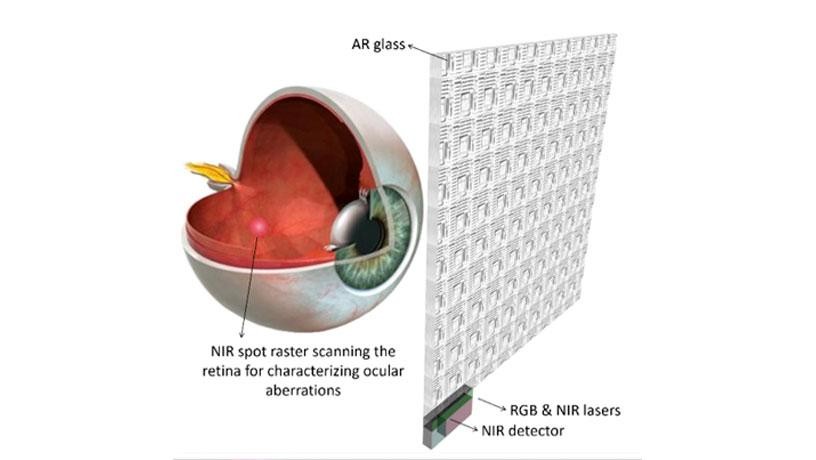

Slide 1: Proposed augmented reality (AR) glass based on Silicon Nitride integrated photonics. The AR glass consists of a 2D array of pixels, RGB and NIR lasers, a NIR detector, a NIR isolator, electronic circuits, and control software.

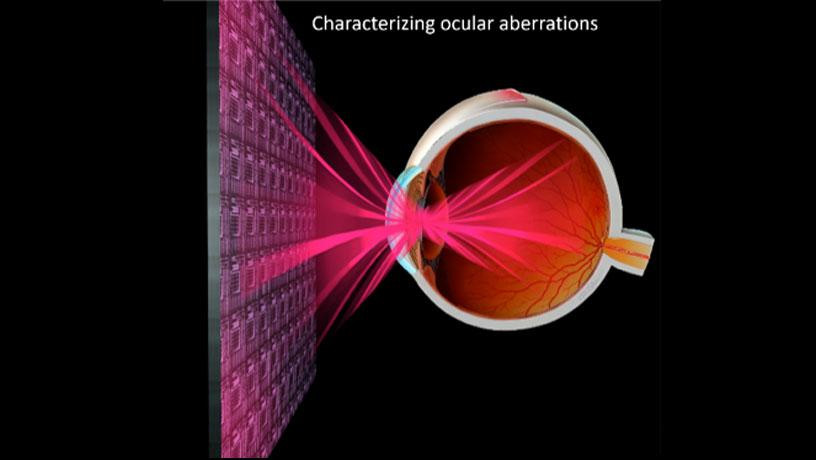

Slide 2: One function of the AR glass is to dynamically characterize ocular aberrations of the wearer by projecting NIR light into the eye and collecting reflected light from the retina.

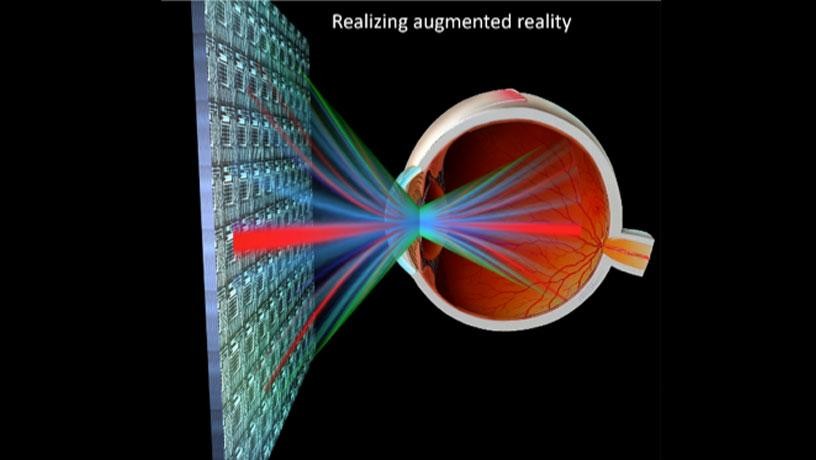

Slide 3: The other function is to project aberration-corrected RGB contextual images into the eye.

The technology leverages recent work by the Columbia Engineering team, including James Hone (mechanical engineering), Nanfang Yu (applied physics), and Dmitri Basov (physics, Arts & Sciences), who have developed novel engineered optical materials (EnMats) that include new phase-transition correlated oxides and 2D excitonic transition metal dichalcogenides (TMDs). These EnMats provide extremely high electro-optic response and have very low losses in the visible (VIS) and near-infrared (NIR). Silicon nitride (SiN) integrated photonics will serve as the backbone onto which these EnMats will be incorporated to realize the AR glass.

The project also draws on other Columbia Engineering strengths, including work by Lipson and Alex Gaeta (applied physics), who have demonstrated high technological capabilities of SiN photonics for the visible (VIS) and near-infrared (NIR) spectral ranges, by Nanfang Yu, who works on flat optics, or “metasurfaces,” that are able to mold optical wavefronts into arbitrary shapes, and by Harish Krishnaswamy (electrical engineering) and Lipson, who lead the field in device and circuit design and large-scale nanofabrication to demonstrate phased arrays in the VIS and NIR.

The proposed AR glass relies on the ultrafast generation of arbitrary wavefronts, both in VIS and NIR. Fast arbitrary wavefront generation in these spectral ranges has been one of the major challenges in optics due mainly to a lack of actively tunable optical materials. Commercially available spatial light modulators based on liquid crystal cells and MEMS mirror arrays do not solve the problem because they are limited in modulation speed and spatial resolution.

“We are proposing an ultra-compact platform for arbitrary wavefront generation with high speed that is based on tunable metasurfaces in the VIS and NIR,” says Yu, assistant professor of applied physics. “The metasurfaces are based on two critical innovations we’ve made at Columbia Engineering: our EnMats with highly tunable complex optical refractive indices and optical resonators that further enhance the electro-optic effect of the EnMats.”

The AR glass’s 2D array of pixels is based on VIS and NIR electrically tunable SiN resonators coated with thin-film EnMats. Each pixel includes RGB (red-green-blue light for projecting the image) and NIR (for characterizing ocular aberrations) optical phased arrays. The AR glass also contains one optical isolator to distinguish between NIR light projected into the eye and NIR light reflected from the retina. The isolator enables simultaneous light projection and detection in the NIR.
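For readers unfamiliar with optical phased arrays, the steering principle can be sketched in a few lines of Python. This is a textbook illustration, not the team's design: it assumes a uniform one-dimensional row of identical emitters, and the emitter count, the 400 nm pitch, the 532 nm wavelength, and all function names are hypothetical choices made for the example. Giving each emitter a fixed phase offset relative to its neighbor tilts the outgoing beam with no moving parts.

```python
import cmath
import math


def phase_step(pitch_m, wavelength_m, steer_deg):
    """Phase offset between neighbouring emitters that steers the beam to steer_deg."""
    return 2 * math.pi * pitch_m * math.sin(math.radians(steer_deg)) / wavelength_m


def array_intensity(n_emitters, pitch_m, wavelength_m, step_rad, angle_deg):
    """Normalised far-field intensity of a uniform 1D array observed at angle_deg."""
    k = 2 * math.pi / wavelength_m  # free-space wavenumber
    s = math.sin(math.radians(angle_deg))
    # Sum the emitter contributions, each delayed by its geometric path
    # difference and advanced by its programmed phase offset.
    field = sum(
        cmath.exp(1j * n * (k * pitch_m * s - step_rad)) for n in range(n_emitters)
    )
    return abs(field) ** 2 / n_emitters ** 2


def brightest_angle(n_emitters, pitch_m, wavelength_m, step_rad,
                    span_deg=30.0, samples=6001):
    """Scan observation angles in [-span_deg, span_deg] and return the brightest one."""
    angles = [-span_deg + 2 * span_deg * i / (samples - 1) for i in range(samples)]
    return max(
        angles,
        key=lambda a: array_intensity(n_emitters, pitch_m, wavelength_m, step_rad, a),
    )


if __name__ == "__main__":
    # Hypothetical values: 64 emitters, 400 nm pitch, green light, 10 degree target.
    step = phase_step(400e-9, 532e-9, 10.0)
    print("phase step per emitter (rad):", step)
    print("brightest angle (deg):", brightest_angle(64, 400e-9, 532e-9, step))
```

The pitch here is kept below the wavelength so that only one main lobe appears in the scanned range; a real device would face additional constraints that this sketch ignores.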

“We will couple laser light into a bus waveguide, distribute it over a network of branch waveguides covering the entire surface of the AR glass, evanescently couple it into the SiN resonators, and then scatter it into the eye,” Lipson notes.

The team plans to develop a scalable fabrication process that is based on standard CMOS techniques, such as Deep UV lithography, and well-established procedures, such as dry transfer methods, to integrate the EnMats into the SiN integrated photonics platform. They will also design analytical and computational tools for modeling large resonator arrays and dynamics of device performance.

“The multifunctionality of our nanostructured AR glass is enabled by extreme capabilities that cannot be achieved using traditional optical elements,” says Lipson. “Our system incorporates the capabilities of wavefront sensing and correction for lower and higher order ocular aberrations in real time, capabilities that no other display technology provides and that have been shown to be critical for clear or even ‘supernormal’ vision of images.”
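The idea of sensing an aberration and then projecting a pre-corrected image can be illustrated with a minimal Python sketch. It is an assumption-laden toy, not the team's algorithm: the eye's wavefront error is modeled with just two standard Zernike terms (defocus and astigmatism), the coefficient values and the 532 nm wavelength are made up, and every function name is hypothetical. The correction is simply the negative of the measured phase, wrapped into one optical cycle, and a Strehl-style ratio shows the effect.

```python
import cmath
import math

WAVELENGTH_UM = 0.532  # assumed green wavelength, in micrometres


def aberration_um(rho, theta, defocus_um, astig_um):
    """Wavefront error (micrometres) at pupil point (rho, theta), two Zernike terms."""
    z_defocus = math.sqrt(3) * (2 * rho ** 2 - 1)
    z_astig = math.sqrt(6) * rho ** 2 * math.cos(2 * theta)
    return defocus_um * z_defocus + astig_um * z_astig


def correction_phase(wavefront_um):
    """Conjugate phase, wrapped into [0, 2*pi), that cancels the measured error."""
    return (-2 * math.pi * wavefront_um / WAVELENGTH_UM) % (2 * math.pi)


def pupil_samples(rings=40, spokes=72):
    """Yield (rho, theta) points that cover the unit pupil with equal-area rings."""
    for i in range(rings):
        rho = math.sqrt((i + 0.5) / rings)
        for j in range(spokes):
            yield rho, 2 * math.pi * j / spokes


def strehl(defocus_um, astig_um, corrected):
    """Strehl-style ratio: squared magnitude of the mean pupil phasor (1.0 is ideal)."""
    total = 0j
    count = 0
    for rho, theta in pupil_samples():
        w = aberration_um(rho, theta, defocus_um, astig_um)
        phase = 2 * math.pi * w / WAVELENGTH_UM
        if corrected:
            phase += correction_phase(w)
        total += cmath.exp(1j * phase)
        count += 1
    return abs(total / count) ** 2


if __name__ == "__main__":
    # Hypothetical coefficients: 0.05 um defocus, 0.03 um astigmatism.
    print("uncorrected:", strehl(0.05, 0.03, corrected=False))
    print("corrected:  ", strehl(0.05, 0.03, corrected=True))
```

In this idealized setting the correction is perfect because the same model both measures and cancels the error; a physical system would be limited by sensing noise, pixel count, and update speed.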

Make way for the Terminator!

—by Holly Evarts

LINKS

http://www.ee.columbia.edu/michal-lipson

https://physics.columbia.edu/content/dmitri-n-basov

http://me.columbia.edu/james-hone

http://apam.columbia.edu/nanfang-yu

http://apam.columbia.edu/alexander-l-gaeta

https://www.ee.columbia.edu/content/harish-krishnaswamy