Prof. Nima Mesgarani Wins $2M NIH Grant to Study How People Hear

Nima Mesgarani, assistant professor in the Department of Electrical Engineering, has won a $2 million, five-year Research Project Grant from the National Institute on Deafness and Other Communication Disorders (NIDCD), part of the National Institutes of Health (NIH), for research on how the brain analyzes speech. He is working with Sameer Sheth (assistant professor, Neurosurgery), Guy McKhann (associate professor, Neurosurgery) and Catherine Schevon (assistant professor, Neurology) from Columbia University Medical Center to develop an innovative approach that records neural activity from electrodes surgically implanted in the cortex of epilepsy patients as part of their clinical evaluation. These recordings provide a highly detailed view of neural activity.

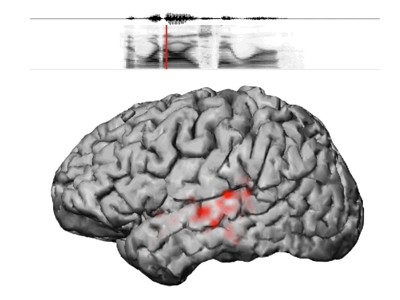

“This NIH grant will be a great catalyst in helping push our research forward,” says Mesgarani. “The cortical mechanisms and transformations involved in speech perception are still surprisingly poorly understood, mainly because the high spatiotemporal resolution required for studying speech is beyond what noninvasive neuroimaging techniques offer. We expect our collaborative approach, which uses both invasive and noninvasive methods, to improve our understanding of how people hear.”

While most individuals can follow a single speaker even in crowded, noisy environments, those with peripheral and central auditory pathway disorders can find this an extremely challenging task. And for researchers, the lack of a detailed neurobiological model of cortical functions underlying robust speech perception has hindered their understanding of how these processes become impaired in various communication disorders such as aphasia, language learning delay, and dyslexia. Mesgarani and his team hope to comprehensively define the nature of the cortical representation of speech and the role of various brain regions in creating a stable perception.

“We already know, from neuroimaging literature, that several brain regions have a specialized role in speech comprehension,” Mesgarani notes. “What we want to determine is just how these areas interact to robustly represent the speech and speaker features, and how expectation and attention are integrated into this representation.”

This research is a significant step toward understanding how the brain internally represents and tracks the voices of individual speakers, and aims to link neural response characteristics to their dynamic properties. The Columbia team's innovation lies in three areas: invasive recording methods for human neurophysiology, novel speech engineering and machine learning methods developed to decode neural responses, and cognitive experiments designed to tease apart the cortical mechanisms behind complex cognitive abilities.

“Our project will greatly impact the current models of cortical speech processing, which is of great interest in many disciplines including neurolinguistics, speech engineering, and speech prostheses,” Mesgarani adds.
