Updating the Brain

A new wave of devices could help researchers reverse Alzheimer’s, restore sight, and avert catastrophically bad decision-making, in the process revealing fresh insights into how the brain works. But what else might they do?

Back when Nima Mesgarani was a graduate student in electrical engineering, he was in the lab cleaning up audio recordings for a project when his thoughts turned to the mysteries of the brain. His team had been recording data from the auditory cortex of animals. Mesgarani, who had been thinking about how he could apply his engineering skills to helping the hearing and speech impaired, saw how the animals were capable of filtering out background noise, a feat that hearing aids, the only scientific solution available, had never been able to replicate—they can amplify or dampen sounds in certain frequencies, but they can’t mindread. But those lab results planted a seed in his own mind. Maybe here in the auditory cortex’s electrical signals could lie the data needed to develop an entirely new approach.

For decades, scientists had known that humans produce distinct, specific brain signals associated with speech, but that was about as far as the science went. Mesgarani became focused on the idea that, as an engineer, he could leverage this insight from neuroscience to build a system that could directly reconnect a person’s thoughts with their ability to hear and speak once the body’s own system failed.

Mesgarani kept at it. Eventually, as a faculty member at Columbia Engineering, he assembled his own team and opened the Neural Acoustic Processing Lab. There, he teamed up with a clinician at Northwell Health, Ashesh Dinesh Mehta, who was working with people who had electrode arrays implanted to monitor epileptic seizures. Mesgarani had created a speech-processing algorithm, and they trained it on recordings of the patients’ neural patterns. To their delight, it worked: their system reconstructed from brain waves the words spoken aloud to these patients. Mesgarani called his tool the vocoder, and when he released his findings in 2019, it marked the first time that anyone had developed a system capable of directly translating human thought into intelligible, recognizable speech.

Mesgarani’s tool belongs to a class of wearable devices (either inside or outside the skull) that are designed to help people perceive, think, communicate, and act. Ever since Elon Musk announced he was getting into the business of rewiring the human mind four years ago, these devices, known as brain-computer interfaces (BCIs), have seen a massive rush of funding. Much of it flows into the private sector, where companies like Musk’s Neuralink posit a future filled with consumer electronics that post to social media with the flick of a thought or jack our smartphones right into skulls.

But for nearly a century, BCIs of another kind have been a dream of researchers, who have sought to develop tools that restore sight, hearing, and mobility to people who’ve suffered catastrophic accidents or debilitating diseases. That progress has been slow, largely because the brain’s biology wasn’t well understood and the technology to study it wasn’t up to the task.

Increasingly, both are evolving in tandem. BCIs live at the intersection where neuroscience becomes neuroengineering. As researchers develop new tools to decode the brain’s extraordinary data processing, engineers are not only leveraging those insights to create neural prosthetics but also advancing our basic understanding of our own minds, and raising profound questions about the nature of the self.

Cognitive Orthotics

Six professors are tackling different areas of the brain.

SELECTIVE ATTENTION

“Most of the people who need a hearing aid won’t use one,” says Mesgarani, “because they’re pretty useless when you need them,” for instance at loud parties, where across-the-board amplification almost defeats the purpose. So what if we could use the brain to help technology help the brain listen? Mesgarani wondered. Returning to his vocoder, an idea occurred to him: “What happens when you have more than just one talker?” They applied their algorithm to that problem, and “what we found was, surprisingly, the only speech that is being reconstructed from the brain is the talker that you’re paying attention to,” he says. In other words, they discovered that when we actively listen to another person, our own auditory center falls into sync with our interlocutor, in an act of mental mirroring connecting the two parties.

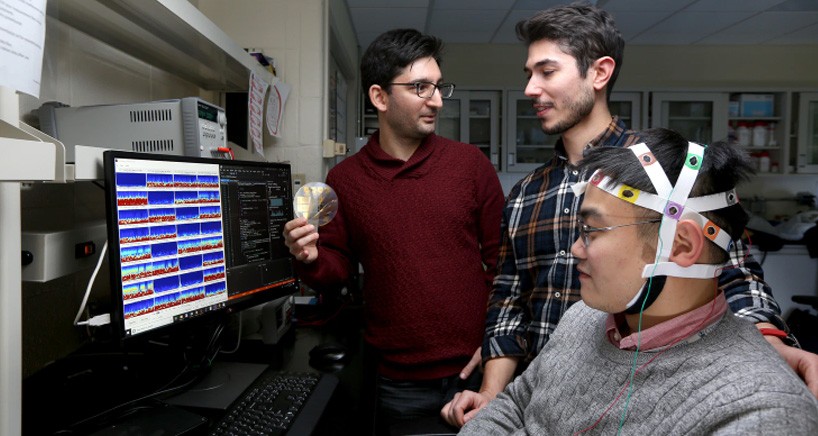

Based on those neurological findings, Mesgarani has since updated his system so that it can amplify only the voice a brain wants to hear (see graphic), a result they hope to soon translate to naturalistic situations beyond controlled lab conditions. His group is also developing better electrodes and finer algorithms to support noninvasive devices, ones that might sit atop the scalp to record neural signals.
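To make the idea concrete, here is a minimal sketch of attention-guided amplification. It is not Mesgarani's system: it assumes, purely for illustration, that the voices have already been separated, that each is summarized by a loudness envelope, and that simple correlation is a good enough match score. The function names, the toy numbers, and the `boost` and `floor` gains are all hypothetical.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

def attended_talker(neural_envelope, talker_envelopes):
    """Index of the talker whose envelope best matches the one decoded from the brain."""
    scores = [pearson(neural_envelope, env) for env in talker_envelopes]
    return max(range(len(scores)), key=scores.__getitem__)

def remix(talkers, attended, boost=2.0, floor=0.5):
    """Turn up the attended talker and turn down, but never mute, the others."""
    gains = [boost if i == attended else floor for i in range(len(talkers))]
    length = len(talkers[0])
    return [sum(g * t[k] for g, t in zip(gains, talkers)) for k in range(length)]

# Toy envelopes (hypothetical): the decoded signal rises and falls with talker A.
talker_a = [0.1, 0.9, 0.2, 0.8, 0.1, 0.7]
talker_b = [0.8, 0.1, 0.7, 0.2, 0.9, 0.1]
neural = [0.2, 0.8, 0.3, 0.7, 0.2, 0.6]

chosen = attended_talker(neural, [talker_a, talker_b])
mixed = remix([talker_a, talker_b], chosen)
```

In this toy case the decoded envelope tracks talker A, so A is boosted while B is only softened. Keeping a nonzero `floor` is a deliberate choice in the sketch, so that a listener could still notice another voice and switch attention to it.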

“I always think of this in terms of our embodied self, and extensions of ourselves,” says Paul Sajda, a professor of biomedical engineering, radiology (physics), and electrical engineering at Columbia. “The cell phone has become so integral, some people really do believe it’s part of them. I mean, if they lose their cell phone, they’re hysterical. It’s a cognitive orthotic.”

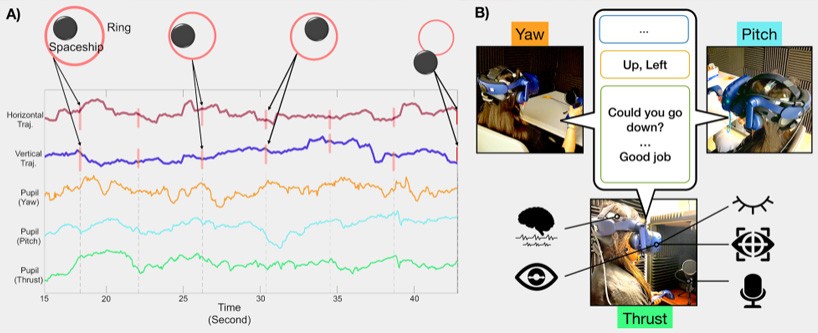

Sajda’s team has studied pilots’ brain dynamics in a virtual-reality flight simulator. Using indicators of activity in three brain areas, they can predict pilot failure 15 seconds ahead of time.

Sajda’s work focuses on how arousal, or responsiveness to stimuli, influences decision-making. He uses BCIs to improve people’s rapid decision-making—the kind that occurs in a second or two. Consider pilots trying to land a plane on an aircraft carrier. They must make quick adjustments to avoid either stalling or hitting the deck too hard. Sometimes they repeatedly overcorrect, leading to a phenomenon called pilot-induced oscillation (PIO): the nose wildly fluctuates up and down. PIO is what brought down the two Boeing 737 Max airliners in 2018 and 2019. It’s difficult for pilots to avoid PIO; once they veer too far in one direction, the danger increases arousal, and they automatically jerk in the other direction. When they go too far the other way, it increases arousal even more, perpetuating a dangerous downward spiral.

Sajda’s team, working with DARPA, the U.S. military’s moonshot division, has studied pilots’ brain dynamics while such errors occur in a virtual-reality flight simulator. Using indicators of activity in three brain areas (see graphic), they can predict pilot failure 15 seconds ahead of time.

One way to use the data to avoid overcorrection is to dampen the flight stick’s effect on the plane. “We had one case where we had a marine aviator doing this task,” Sajda says, “but the marine broke the stick. He really thought he could get out of it. So he just really shoved it a lot.” So instead of using the BCI to adjust the plane, they’re now using it to adjust the pilot. They provide audio feedback that supposedly tracks the pilot’s heartbeat but in fact beeps much slower, only 40 or 50 times a minute. This calms the pilot. And when the pilot is about to get into trouble, they turn up the volume. Using this technique, they can increase simulated flight time by 75%.
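The feedback rule described above can be sketched in a few lines. This is an illustrative assumption, not the team's software: the `risk` score stands in for whatever the brain-activity model predicts, and the threshold and volume levels are hypothetical.

```python
def beep_interval_s(bpm):
    """Seconds between beeps for a given pace in beats per minute."""
    return 60.0 / bpm

def beep_volume(risk, base=0.3, alert=1.0, threshold=0.7):
    """Quiet pacing by default; louder once predicted risk crosses the threshold.

    `risk` is a hypothetical score between 0 and 1 from a failure predictor.
    """
    return alert if risk >= threshold else base

# The article's pacing range of 40 to 50 beeps a minute.
slow = beep_interval_s(40)
fast = beep_interval_s(50)
calm_volume = beep_volume(0.2)
warning_volume = beep_volume(0.9)
```

At 40 to 50 beeps a minute, the pilot hears a beep every 1.2 to 1.5 seconds, slower than a pulse under stress is likely to be.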

As is often the case, tech designed to tackle extreme examples can lay the groundwork for later widespread implementation. For his part, Sajda says he may one day turn his tool on himself: a similar system could routinely predict when he—or any of us—will make a dangerous mistake while driving.

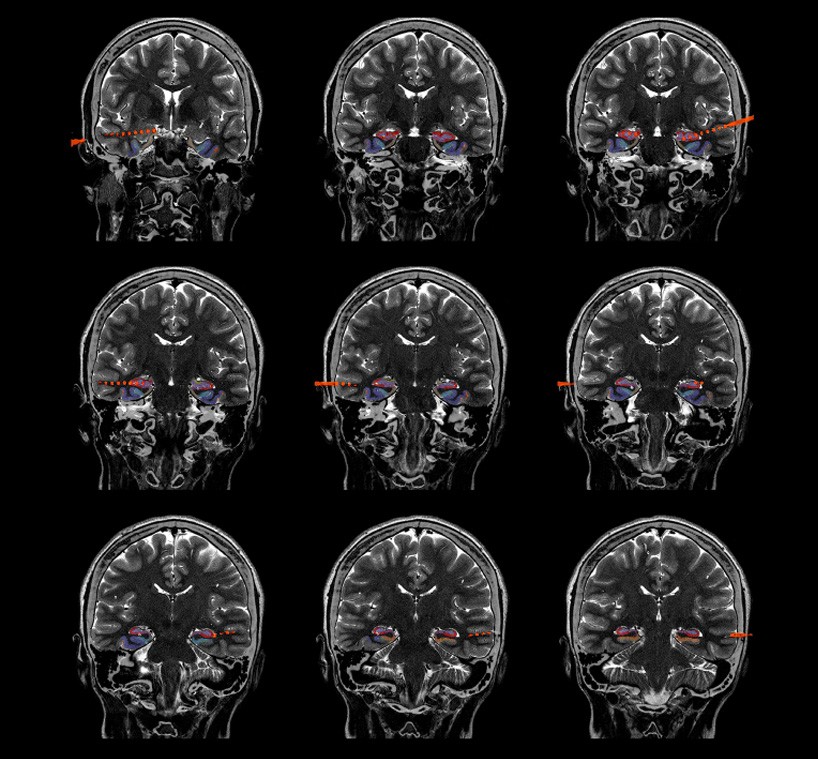

But when it comes to how our brains process arousal, overstimulation is just one side of the coin. In another project, Sajda aims to reduce depression by amplifying the effect of a treatment called transcranial magnetic stimulation (TMS), an option often tried once standard treatments have failed. In TMS, doctors place a pulsing electromagnet against the skull to alter the activity of neurons on and just below the surface of the brain. But they’d also like to target a deeper structure called the anterior cingulate cortex (ACC) because it shows reduced activity in some people with depression, one of the most common and misunderstood diseases. Pre-pandemic, nearly 20 million U.S. adults reported experiencing at least one major depressive episode, and those numbers have only increased since the onset of COVID-19. And the stakes are high: in an alarming subset of cases, severe depression has proven resistant to standard treatments. Sajda and his collaborators are now in clinical trials to test whether a new method they’ve developed to stimulate the ACC (see graphic) can make a dent in the disease.

For people in the throes of a life-altering illness, the choice to take on a novel or invasive treatment can be a no-brainer, but for those in less dire circumstances, the calculus shifts as the trade-offs become less easy to ignore. For instance, a BCI might be hacked to read or control your neural activity. That’s not anything to lose sleep over yet; the mind reading BCIs can do today is still too coarse to cause concern, Sajda notes. “But of course the technology is just going to get better.”

The enemy doesn’t have to come from without, though. Already, the relationships we build with technology can work on us in unexpected ways. Soldiers have been found to treat their robotic dogs like real animals; we may come to treat BCIs that learn a lot about us and assist us as close partners. From there, we may come to find that we’ve begun to treat BCIs not only as partners but as parts of ourselves. Will that change our ideas about ourselves or what it means to be human? “We might become more entangled the more closely we’re integrated with these systems,” Sajda says.

MAKING MEMORIES

In many ways, our memories define us; when they begin to erode, as in the case of Alzheimer’s disease, we can feel like we’re losing our very sense of self. Joshua Jacobs, an associate professor of biomedical engineering, studies the formation of memory with an eye toward preserving it. Like Mesgarani, Jacobs takes advantage in one of his experiments of electrodes that doctors have implanted on the brains of people with severe epilepsy to monitor seizures. Jacobs’s subjects play a video game he devised called Treasure Hunt, in which they see the locations of valuable items in a field while their brain is stimulated at either 3 or 10 hertz (see graphic) and then must remember where each item was. “The ultimate vision is to have an implanted memory prosthetic,” Jacobs says. People might control it with a smartphone when they need to store an important memory. His team hopes to have findings soon, with the experiments revealing when, where, and how brain stimulation should be applied to most effectively improve memory encoding.

Users will need to be the ones to decide when they’d want to use such BCIs. “Enhancing memory is a tricky thing,” Jacobs says. “Maybe there’s a reason the brain doesn’t remember everything perfectly well. If I remembered every commute to work perfectly and then something very interesting happens, maybe I have a hard time pulling out that one very special event.” Still, it’s an unprecedented responsibility. What if you had at your fingertips a knob that allowed you to turn up a memory prosthetic for certain experiences you really want to encode?

Even something as straightforward as aiding hearing is not so simple. “There is a sweet spot,” Mesgarani says. “You want to amplify your target but you also don’t want to eliminate everybody else, because then you won’t be able to switch attention.” And you don’t want to be caught off-guard by an approaching car.

CHIPS ON THE TABLE

BCIs will advance only if better hardware, and not just better algorithms, becomes available. The main reason BCIs have been so hard to realize is the difficulty of interfacing between such a delicate biological system and an entirely artificial one. And then there’s the fact that nature outclasses the technology at nearly every turn.

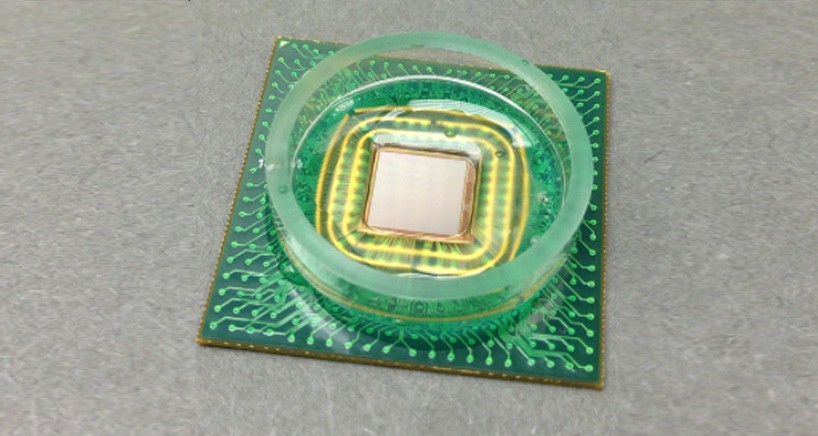

Some brain implants have only a few dozen electrodes, but DARPA’s Neural Engineering System Design program is funding researchers to build devices with a million of them. Ken Shepard, a professor of biomedical engineering, has received $15.8 million from the program. His current design has 65,000 electrodes. What’s more, it’s much smaller than anything previously like it. It’s a single chip that sits on the surface of the brain and communicates wirelessly with another device strapped to the outside of the head, which relays information to a computer. The chip also receives power wirelessly from the strapped-on relay station. The implant doesn’t need a large radio or a battery.

Shepard’s team uses traditional integrated-circuit technology. TSMC, a large microchip manufacturer, builds the chip to Shepard’s specifications, and then MIT’s Lincoln Laboratory post-processes it, removing excess material and adding electrodes made of titanium nitride. The result is a flexible chip that’s 1.2 centimeters per side and only 15 microns (thousandths of a millimeter) thick. “That is the most volume-efficient implant you could possibly have,” Shepard says. It’s a million times smaller per electrode than the state-of-the-art Intellis device, made by Medtronic. Shepard says his team can get to a million electrodes by making the chip wider, adding more radios, and having it do onboard data processing to compress the data it sends.
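A quick back-of-the-envelope calculation shows what those dimensions imply. The side length, thickness, and electrode count come from the figures above; the electrode spacing is an estimate that assumes, hypothetically, a uniform square grid covering the whole chip, which the article does not state.

```python
import math

SIDE_MM = 12.0             # 1.2 centimeters per side
THICKNESS_MM = 15 / 1000   # 15 microns, at 1,000 microns per millimeter
ELECTRODES = 65_000        # current design

area_mm2 = SIDE_MM * SIDE_MM
volume_mm3 = area_mm2 * THICKNESS_MM
volume_per_electrode_mm3 = volume_mm3 / ELECTRODES

# Assumption: electrodes spread evenly over the full surface in a square grid.
pitch_um = math.sqrt(area_mm2 / ELECTRODES) * 1000

print(f"chip volume: {volume_mm3:.2f} cubic millimeters")
print(f"volume per electrode: {volume_per_electrode_mm3:.2e} cubic millimeters")
print(f"estimated electrode spacing: {pitch_um:.0f} microns")
```

The whole chip comes to a little over 2 cubic millimeters, and under the even-grid assumption the electrodes would sit a few tens of microns apart.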

One early application may be helping blind people see again by transmitting information from a camera to the visual cortex (see graphic). Many people flinch at the idea of a chip in their head, but one of Shepard’s medical collaborators tells him, “If you don’t have your sight, you’ll undergo anything for the promise to get your sight back.”

With any BCI, there will be a trade-off between efficacy and safety. “Any device you put in the brain is going to have risk,” Jacobs says. “There’s always a risk of infection and other things happening. These are even under the best of circumstances.” According to Shepard, “my personal feeling is that if you’re talking about augmentation of function”—not just helping unhealthy people but improving the functioning of healthy people—“a surgical implantation will never be justified, at least not in my lifetime.”

Implants have long held back the entire BCI field. Dion Khodagholy, an assistant professor of electrical engineering, has developed an approach for countering three major stumbling blocks. Traditional devices use metal electrodes and silicon circuitry, but they face several problems. First, the brain is wet, and water damages electronics. Second, bodies see metal and silicon as intruders and mount immune defenses against them. Third, the brain communicates using ions—charged atoms—while electronics usually use electrons, and metal electrodes don’t efficiently translate signals between the two.

Khodagholy’s solution: implants whose transistors—the building blocks of computational circuitry—and electrodes are both made of special soft and biocompatible polymers. In these polymers, ions can penetrate the material, changing its ability to conduct electrons. His group has also developed fabrication processes to integrate these polymers with flexible electronics. The result is a conformable sheet that can be placed on the brain to record or stimulate the underlying cells. The size of the device can be varied from micrometers to centimeters in diameter and contain up to hundreds of electrodes.

Beyond the materials science and electrical engineering, Khodagholy works with Jennifer N. Gelinas, an assistant professor of neurology at Columbia, using the devices to study systems neuroscience—how networks of neurons communicate to process information. They’ve found, for instance, new biomarkers for memory storage in the brains of rats. “This is a really active, exciting area in our lab,” Khodagholy says.

And now they’re translating what they’re learning into potential medical applications. They’ve placed the implants on the cortices of humans during surgery to test the devices’ safety and ability to record signals. Their eventual goals include improving the ability to localize and remove epileptic tissue and even devising therapies to treat memory dysfunction. Gelinas, a pediatric neurologist, is enthusiastic about the potential for soft, miniaturized devices to be of value for children with refractory neurologic disorders, as they could reduce side effects associated with typical clinical devices. However, extensive testing is necessary before such devices could be extended to this vulnerable population.

BETTER THAN WELL

One can imagine people using memory enhancers, as Jacobs’s and Khodagholy’s technology might do, not only to treat Alzheimer’s but also to augment healthy memory. But augmentation comes in many flavors. “In vision, we have corrective eyeglasses,” Mesgarani says, “but we also have binoculars.” Any technology or medical treatment raises the issue of fairness: some people will have access and others won’t. That’s especially true for augmentation. We’ll have to decide when people are allowed to use certain devices “in the same way that steroids in sports has become a real challenge,” Jacobs says. “I don’t see why it wouldn’t be the same for academic pursuits and brain stimulation devices.”

Qi Wang, an associate professor of biomedical engineering, engages with the question of augmentation directly. Like Jacobs and Khodagholy, he counts memory among his key research interests. And like Sajda, he sees the arousal system as a lynchpin. He wants to understand and influence it so as to impact the brain’s ability to focus and process information quickly. “After you drink coffee, you probably feel more alert, more productive, right?” he says. “People use chemicals to boost capacity, but of course our brains are able to do it seamlessly”—without external chemicals. He recalls a student from Wisconsin who enjoys hunting. “He always says while you’re sitting in the woods, you hear a branch snap and you can hear and see much clearer. We’re trying to use neurotechnology to release this capacity.”

Wang has started a spinoff company developing patches worn on the neck to stimulate the vagus nerve, which in turn stimulates the locus coeruleus, an area of the brain stem that manages arousal (see graphic). He and his colleagues have just received approval from the University’s research ethics board to see if the patches help people discriminate between two different audio tones. After perception they’ll test higher-level cognitive tasks involving memory or inhibition. “We joke that eventually the company’s biggest competitor might be Starbucks,” he says with a laugh.

One advantage of caffeine, Wang says, is that you can drink it discreetly if you don’t want people to know you’re boosting arousal, whereas a BCI might be harder to hide. Still, Wang sees BCIs as less problematic than, say, genetic engineering. “We use our technology to release some capacity we already have,” he says. “It’s not like a designer baby. It’s like physical therapy or physical training.” The technology will only make us work more efficiently or help us navigate, he says. “When you drive, Google Maps just makes your life much, much easier.”

Today, we’re limited by bulky hardware, slow software, high cost, and great risk. But all of these problems will slowly shrink.

For those in need, that future may be right at hand. Wang notes that a hundred years ago, the idea of a pacemaker—electronic hardware implanted in the chest—would have seemed crazy. “If we’re talking about in 30 years, I think everybody will have some noninvasive neural interfaces,” he says. “And in 50 years, potentially everybody who needs an implant can have a viable option.”