The paper, “Complete Dictionary Recovery over the Sphere,” asks: how can we concisely represent data such as digital audio, images, and genome sequences? This is the central question in data compression, and it is becoming increasingly important to data acquisition, processing, and analysis. Existing technology has required extensive manual design effort and difficult mathematical analysis. An attractive alternative is to learn compact representations directly from the data of interest, an approach that has seen numerous empirical successes. The learning approach is particularly relevant when the volume and variety of data are growing tremendously, as they are today. This paper is among the first efforts to obtain a solid understanding of the learning approach.

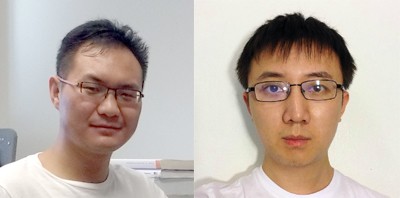

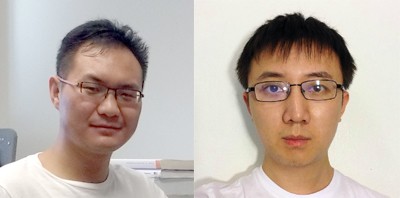

In early July, two Electrical Engineering doctoral candidates, Ju Sun and Qing Qu, took home the Best Student Paper Award at the Signal Processing with Adaptive Sparse Structured Representations (SPARS) 2015 conference. Both students are currently advised by Assistant Professor John Wright.

Ju Sun earned his B.Eng. degree in computer engineering (with a minor in Mathematics) from the National University of Singapore in 2008. He has been pursuing a PhD degree at Columbia since 2011. His research interests lie at the intersection of computer vision, machine learning, numerical optimization, signal/image processing, information theory, and compressive sensing, with a focus on modeling, harnessing, and computing with low-dimensional structures in massive data, with provable guarantees and practical algorithms. Recently, he has been particularly interested in explaining the surprising effectiveness of nonconvex optimization heuristics arising in various applications.

Prior to enrolling at Columbia University, Qing Qu received a B.Eng. in Electronic Engineering from Tsinghua University, Beijing, in Jul. 2011, and an M.Sc. in Electrical Engineering from the Johns Hopkins University, Baltimore, in Dec. 2012. He interned at the U.S. Army Research Laboratory from Jun. 2012 to Aug. 2013. His current research focuses on developing practical algorithms and provable guarantees for nonconvex problems in signal processing and machine learning that involve data with low-dimensional intrinsic structure.