When Trust is a Factor

When Dean Shih-Fu Chang, a professor of Electrical Engineering, started working to make information systems more useful three decades ago, some of the most vexing problems required developing search algorithms that could find a “needle” in a digital haystack.

Today’s state of the art is far more advanced.

In his latest work with former Columbia Electrical Engineering postdoc Dr. Chris Thomas, Chang is creating systems that extract and integrate information from many different types of media — including text, images, videos, and audio — to develop a sophisticated understanding of concepts and how they relate to each other.

Now, as a tidal wave of content produced by generative AI tools like ChatGPT and DALL·E crashes onto the internet, those systems have an increasingly vital use case: automatic fact-checking.

How do users check whether the claims made in a document are consistent with knowledge present in other trusted sources? Can researchers build efficient tools to help users check facts while they are making decisions?

Those are the questions Chang and Thomas seek to answer.

“It’s less about search and access,” says Chang, dean and the Morris A. and Alma Schapiro Professor at Columbia Engineering. “In the AI age — the digital manipulation age — the most important challenge is determining the trustworthiness and integrity of information.”

ON THE CUTTING EDGE OF KNOWLEDGE GRAPH TECHNOLOGY

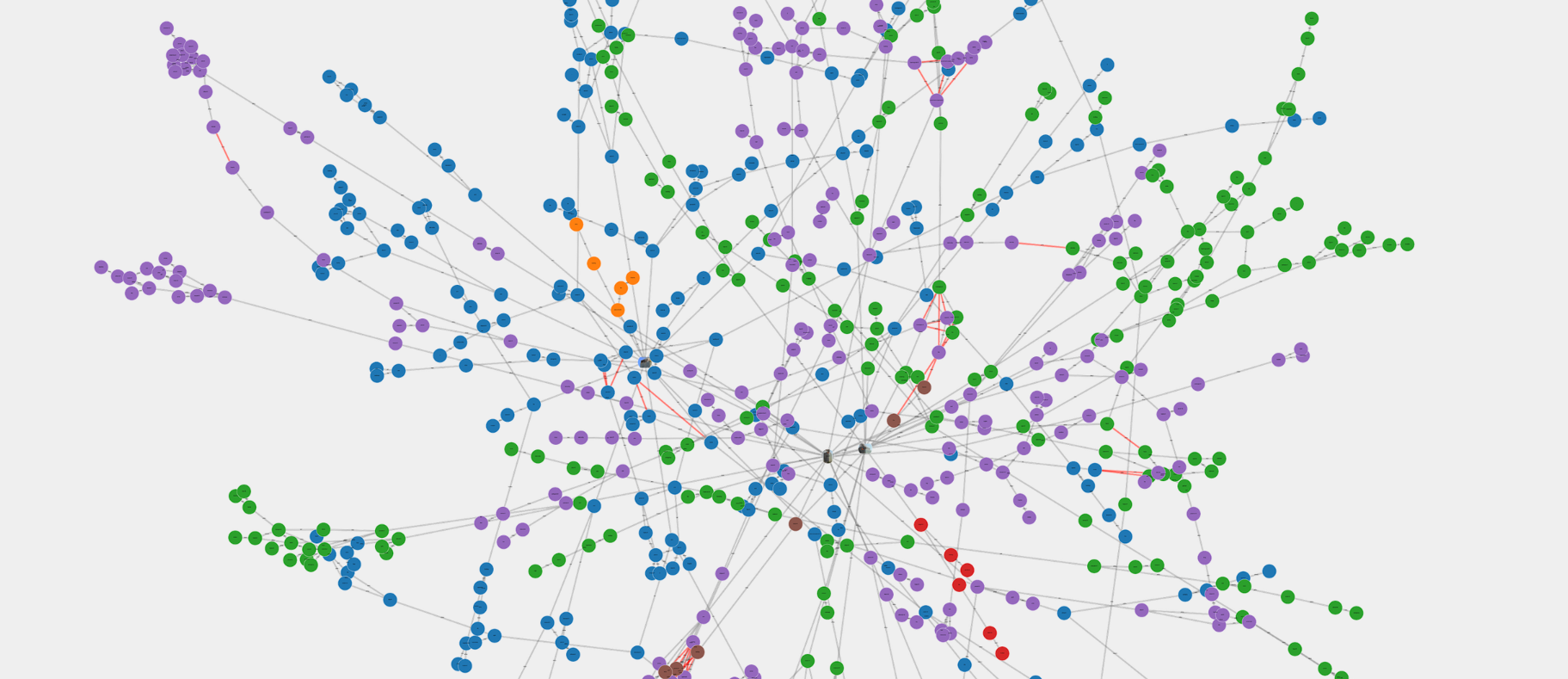

The programs Thomas and Chang have developed are built on top of vast collections of interrelated concepts stored as what computer scientists call knowledge graphs. These databases contain information about two things: entities and the relationships among those entities. For example, the entities “Barack Obama” and “United States” could be connected by the relationship “former president.” In natural language, that information could be expressed in a simple sentence: “Barack Obama is a former president of the United States.” In computer vision, it could be manifested as a photo of the president performing official duties.
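To make the idea concrete, here is a minimal sketch of a knowledge graph stored as subject-relation-object triples, using the Obama example from the paragraph above. The `Triple` and `KnowledgeGraph` names and the methods on them are hypothetical, chosen only for illustration; nothing here reflects how Thomas and Chang's systems are actually implemented.

```python
from collections import namedtuple

# One fact in the graph: two entities joined by a relationship.
# (Hypothetical structure for illustration only.)
Triple = namedtuple("Triple", ["subject", "relation", "object"])


class KnowledgeGraph:
    """Toy in-memory knowledge graph backed by a set of triples."""

    def __init__(self):
        self.triples = set()

    def add(self, subject, relation, obj):
        """Record that `subject` is linked to `obj` by `relation`."""
        self.triples.add(Triple(subject, relation, obj))

    def relations_between(self, subject, obj):
        """Return, in sorted order, every relationship linking two entities."""
        return sorted(
            t.relation
            for t in self.triples
            if t.subject == subject and t.object == obj
        )


kg = KnowledgeGraph()
kg.add("Barack Obama", "former president", "United States")
print(kg.relations_between("Barack Obama", "United States"))
# prints ['former president']
```

A real multimodal graph would also attach evidence such as image regions or video segments to each triple; this sketch keeps only the text-level structure.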

By including information from many different forms of media, Thomas and Chang’s work in building automatic fact-checking systems goes a step beyond most existing knowledge graph technology.

When a user wants to look into a document or social media post, the new system extracts the concepts from that target document to build a knowledge graph, which contains specific claims involving certain entities, events, and relations reported in the target document — whether it’s text, video, audio, or an image.

Their system then automatically retrieves a set of relevant text, images, and video from trusted sources, archives, media outlets, or the authorities. Information is extracted from those inputs and configured into a background knowledge graph with a granular understanding of the topics described by the reference material.

Once the researchers build that background knowledge graph from trusted source material, they can verify each specific claim in the target document by comparing the claim knowledge graph against the background knowledge graph (and the associated multimedia content). This multimodal machine learning model uses deep learning and transformer models similar to those used in the state-of-the-art large language models. Unlike other tools, the system Thomas and Chang have developed incorporates the fine-grained structured information captured in the knowledge graphs.
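The comparison step can be pictured with a deliberately simplified sketch. The system described here relies on deep learning and transformer models; the code below swaps in plain symbolic matching over triples, so it shows only the shape of the comparison, not the researchers' method. The function name `verify_claims`, the `exclusive_relations` parameter, and all sample triples are assumptions made up for this example.

```python
def verify_claims(claim_triples, background_triples, exclusive_relations):
    """Label each claim triple as supported, contradicted, or unverified.

    A claim is "supported" if the same triple appears in the background
    graph. It is "contradicted" if its relation is declared exclusive
    (only one value can hold) and the background graph records a
    different value for the same subject. Otherwise it is "unverified".
    """
    background = set(background_triples)
    verdicts = {}
    for claim in claim_triples:
        subject, relation, obj = claim
        if claim in background:
            verdicts[claim] = "supported"
        elif relation in exclusive_relations and any(
            s == subject and r == relation and o != obj
            for s, r, o in background
        ):
            verdicts[claim] = "contradicted"
        else:
            verdicts[claim] = "unverified"
    return verdicts


# Hypothetical background graph built from trusted sources.
background = [
    ("organizer", "announced", "policy"),
    ("audience", "reaction", "applause"),
]
# Hypothetical claim graph extracted from the target document.
claims = [
    ("organizer", "announced", "policy"),
    ("audience", "reaction", "few claps"),
    ("room", "occupancy", "mostly empty"),
]

verdicts = verify_claims(claims, background, exclusive_relations={"reaction"})
for claim, verdict in verdicts.items():
    print(claim, "->", verdict)
```

Returning a verdict per claim, rather than one score for the whole document, is what lets a tool point a reader to the specific statement that conflicts with the trusted material.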

“Combining different forms of media is extremely useful because it allows you to exploit different aspects of information in the different modalities,” Chang says. Text tends to include factual information about events and people; images and videos typically contain details about setting, mood, and sentiment.

For example, text articles alone wouldn’t be useful in fact-checking a claim like, “The organizer spoke to a mostly empty room and there were few claps when he announced his policy.”

“News articles may have facts about the policy that was announced,” says Thomas, now an assistant professor of computer science at Virginia Tech. “But you can only determine if the claim is true by looking at images, watching video, or listening to audio.”

If the claim turned out to be false, the latest version of the system could identify the segment of video that shows people were, in fact, clapping. This ability to explain what sources the claim does or doesn’t contradict is important for fact-checking and crucial for introducing AI-enabled solutions into applications that determine the trustworthiness of information.

Dean Shih-Fu Chang (left) and Chris Thomas, an assistant professor in the Department of Computer Science at Virginia Tech.

KEEPING WATCH FOR MACHINE-GENERATED CONTENT

In addition to detecting and analyzing false claims written by humans, these systems are well suited to detecting machine-generated content because the output from ChatGPT and similar programs is riddled with false information and inconsistent reasoning.

“These are subtle inaccuracies that most people wouldn’t know but which we can use to look for inconsistencies,” Thomas says. “It’s a signal that the system is ‘hallucinating’ facts.” Such hallucination is common behavior for generative AI models.

The system’s multimodal capabilities help it sniff out machine-generated articles based on another clue: mismatches between the text of an article and the image used to illustrate it. For example, a bad actor who used a generative AI model to create thousands of fake articles about an event at the White House could easily scrape pictures off the internet to accompany each article.

“This automatic process of retrieving an image for the fake article can introduce cross-modal inconsistencies,” Thomas says. If the article talks about the President giving a speech outside in February, the system would raise a flag if the image showed people in summer clothes.
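As a rough illustration of a cross-modal check, the sketch below compares attributes extracted from an article's text with attributes extracted from its image, using a hand-written rule table. Everything in it is assumed for the example: the attribute names, the single rule, and the `cross_modal_flags` function. A real system would learn such relationships from data rather than rely on fixed rules.

```python
# Hypothetical rule table: each entry pairs a text attribute with an
# image attribute that should not appear alongside it.
CONFLICT_RULES = [
    (("month", "February"), ("clothing", "summer")),
]


def cross_modal_flags(text_attrs, image_attrs, rules=CONFLICT_RULES):
    """Return a description of every text/image conflict found."""
    flags = []
    for (t_key, t_val), (i_key, i_val) in rules:
        if text_attrs.get(t_key) == t_val and image_attrs.get(i_key) == i_val:
            flags.append(
                f"text {t_key}={t_val} conflicts with image {i_key}={i_val}"
            )
    return flags


# Attributes assumed to have been extracted upstream from each modality.
article = {"speaker": "President", "setting": "outdoors", "month": "February"}
photo = {"setting": "outdoors", "clothing": "summer"}

flags = cross_modal_flags(article, photo)
print(flags)
```

An empty list means no rule fired, which is not the same as confirming that the image belongs with the article.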

In their most recent presentation, Thomas and Chang explain an even more sophisticated method for comparing a claim to information in an image. The approach “goes beyond direct conflicts in the knowledge graph and uses reasoning to detect contradictions that aren’t obvious,” Thomas says. Rather than simply indicating whether all claims in the article are consistent with the knowledge graph, the latest system can explain which claims are contradictory.

HELPING HUMANS MAKE BETTER USE OF INFORMATION

Chang says his goal in developing these systems is to allow readers to seamlessly check information while they read, much as many browsers make it easy to highlight a word to find its definition. The systems will be especially useful when a reader doesn’t have the time to check sources by hand or the background knowledge to recognize when a caption misidentifies something in an image or video.

For example, since most people lack the technical expertise to know one piece of battlefield equipment from another, a misleading article could support its narrative by using a photo taken out of context and writing a deliberately incorrect caption. Most readers would never know, but the multimodal, multi-source verification systems that Chang and Thomas work on would very likely pick up on the inconsistencies.

“The system helps readers understand the provenance of the information,” Chang says. When a reader encounters a claim, they can efficiently double-check the trustworthiness of the sources and see what else has been written about the topic. If the author misunderstood a source or made something up (or if a generative AI bot fabricated the whole thing), the systems Chang, Thomas, and their collaborators are building may help users know.

“In the end, these systems allow the human reader to be the final decision maker,” Chang says.