Active Query Sensing for Mobile Location Search

Summary

While much exciting progress is being made in mobile visual search, one important question has been left unexplored in all current systems: when the first query fails to find the right target (which happens with up to 50% likelihood), how should the user form a search strategy in the subsequent interaction? We propose a novel Active Query Sensing (AQS) system that suggests the best way to sense the surrounding scenes when forming the second query for location search. This work may open up an exciting new direction for developing interactive mobile media applications through innovative exploitation of active sensing and query formulation.

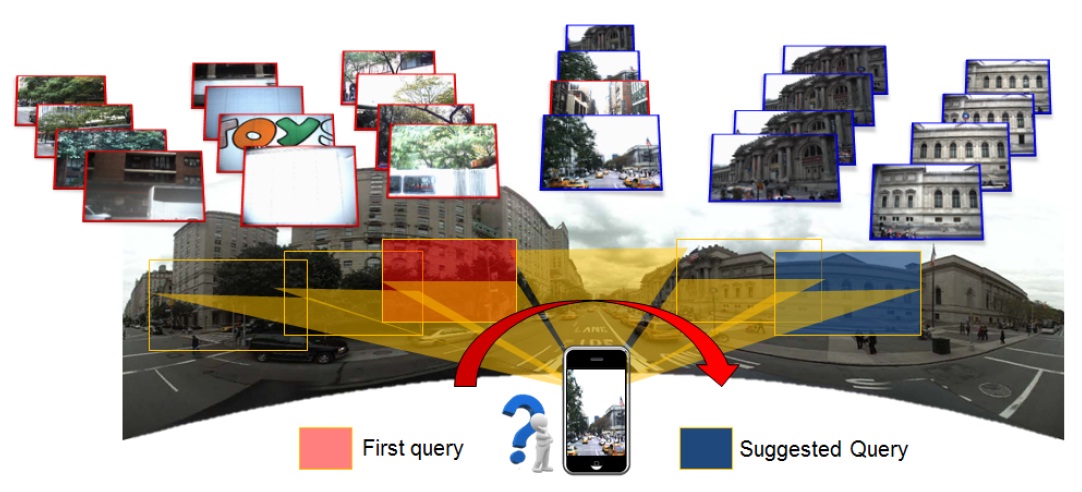

Our proposed framework is general. It can be extended and applied to mobile visual search of targets with multi-view references, such as the example shown below.

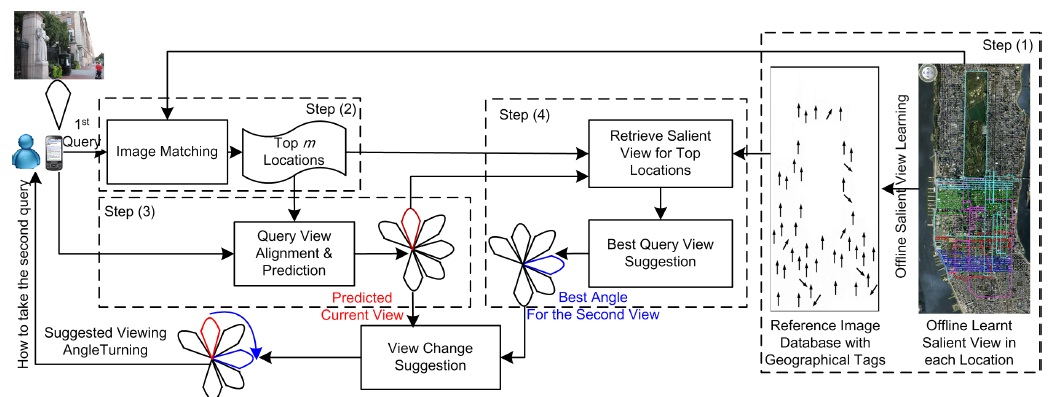

System Architecture

The basic idea of AQS is to use the first query as a "probe" to narrow down the solution space. Subsequent query views are then chosen to maximally reduce the location search uncertainty. We accomplish this by developing several unique components: an offline process that analyzes the saliency of the views associated with each geographical location based on score distribution modeling and predicts the visual search precision of individual views and locations, and an online process that estimates the view of an unseen query and suggests the best subsequent view change.

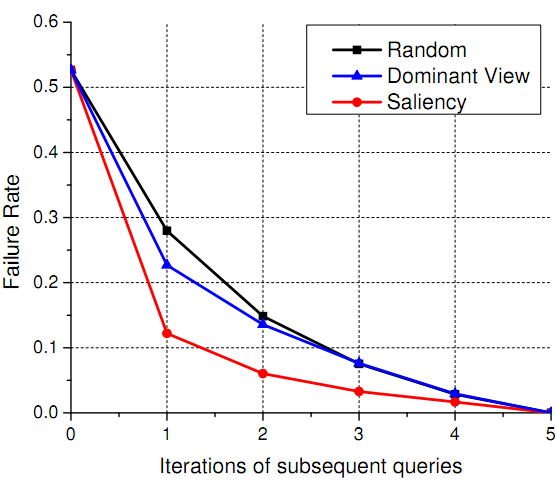
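The view-suggestion step can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes that the first query leaves a set of candidate locations with (unnormalized) probabilities, that the offline process has produced one saliency score per discrete view for each location, and that the best next view is the one with the highest probability-weighted saliency. The function name `suggest_next_view` and all parameter names are hypothetical.

```python
from typing import Dict, List


def suggest_next_view(
    candidate_probs: Dict[str, float],
    view_saliency: Dict[str, List[float]],
    current_view: int,
) -> int:
    """Pick the view index with the highest expected saliency.

    candidate_probs: hypothetical location -> weight after the first query.
    view_saliency:   hypothetical location -> per-view saliency scores
                     (assumed to come from an offline analysis).
    current_view:    estimated view index of the query just taken; it is
                     skipped so that the suggestion is always a view change.
    """
    if not candidate_probs:
        raise ValueError("at least one candidate location is required")

    total = sum(candidate_probs.values())
    n_views = len(next(iter(view_saliency.values())))

    best_view, best_score = -1, float("-inf")
    for view in range(n_views):
        if view == current_view:
            continue
        # Expected saliency of this view over the remaining candidates.
        score = sum(
            (weight / total) * view_saliency[loc][view]
            for loc, weight in candidate_probs.items()
        )
        if score > best_score:
            best_view, best_score = view, score
    return best_view
```

For example, with two candidate locations weighted 0.6 and 0.4 and four views per location, the function returns the index of the view that is most likely to disambiguate them, under the weighting assumption stated above.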

Performance Evaluation

The figure below shows failure rates over successive query iterations for different active query sensing strategies. Using a scalable visual search system implemented over a NYC street view data set (0.3 million images), we show that the proposed method (the curve labeled "Saliency") can achieve a performance gain of as much as twofold, reducing the failure rate of mobile location search from 28% to only 12% after the second query.

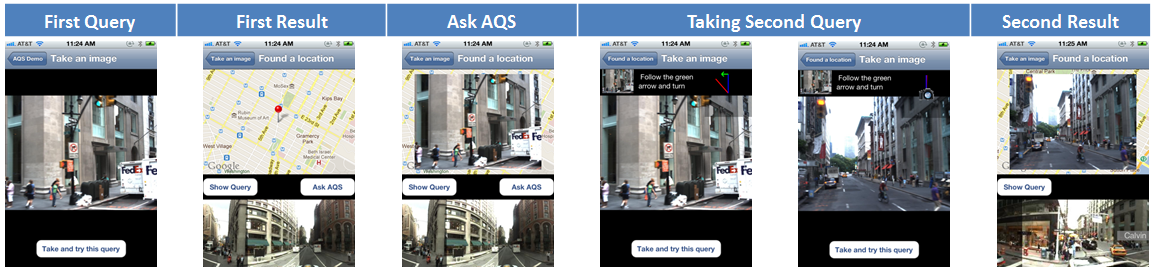
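As a quick check of the twofold figure, using only the two failure rates quoted above:

$$
\frac{28\%}{12\%} \approx 2.3, \qquad \frac{28\% - 12\%}{28\%} \approx 57\%
$$

That is, the failure rate after the second query is less than half of its starting value, a relative reduction of roughly 57%.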

User Interface

We have implemented a complete end-to-end prototype of the proposed AQS system, including an iPhone mobile client, communication modules, and the image matching and retrieval server. The interface lets users visualize search results by combining a panorama image with a map. We have also designed an intuitive mechanism that shows the user the best view angle and the required camera rotation for taking the next query. Snapshots of the mobile user interfaces are shown below.
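How the prototype computes the required camera rotation is not described here. As a minimal sketch, assuming that both the current and the suggested view are expressed as compass headings in degrees, the signed shortest rotation could be derived as follows. The function name `camera_rotation` is hypothetical.

```python
def camera_rotation(current_deg: float, target_deg: float) -> float:
    """Signed shortest rotation from current to target heading, in degrees.

    Assumes compass-style headings (clockwise positive). The result lies in
    (-180, 180]: positive means turn clockwise, negative means turn
    counterclockwise.
    """
    delta = (target_deg - current_deg) % 360.0
    return delta - 360.0 if delta > 180.0 else delta
```

Wrapping the difference into (-180, 180] keeps the suggested turn as small as possible, so a user facing 350 degrees is asked to turn 20 degrees clockwise to reach 10 degrees rather than 340 degrees the other way.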

Acknowledgement

We would like to thank NAVTEQ for providing the NYC image data set, and Dr. Xin Chen and Dr. Jeff Bach for their generous help.

People

Felix X. Yu, Rongrong Ji, Tongtao Zhang, Shih-Fu Chang

Publications

- Felix X. Yu, Rongrong Ji, Shih-Fu Chang. Active Query Sensing for mobile location search. In Proceedings of ACM International Conference on Multimedia (ACM MM), 2011. [Slides] [Poster]

- Felix X. Yu, Rongrong Ji, Tongtao Zhang, Shih-Fu Chang. A mobile location search system with Active Query Sensing. In Proceedings of ACM International Conference on Multimedia (ACM MM), 2011. [Demo Video] [Poster]