Visual Islands: Intuitive Browsing of Visual Search Results

Background

Visual Islands is a complete framework that reorganizes a set of results into an intuitive display that emphasizes dominant underlying semantics. This page collects a few key points of Visual Islands, but we encourage you to view the video examples, the snapping process, the Visual Islands publications, or related documents for a more in-depth exploration.

Motivation

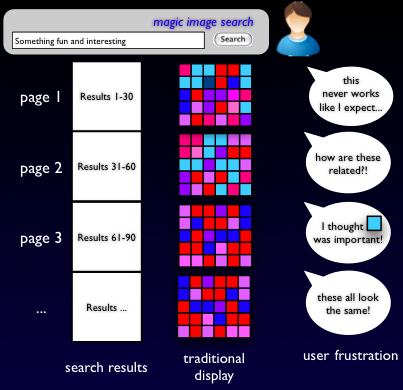

What inspired Visual Islands? Modern multimedia information retrieval systems return thousands of candidate results, often providing high recall while sacrificing precision. Visually inspecting many lines and pages of irrelevant results wastes a user's time. This burden is exacerbated when the search interface is small with very little usable screen area, as on mobile devices.

Coherence and Diversity

Humans make relevance judgements more quickly on items that are related. However, discovering a relationship that is ideal for this judgement process is not trivial. The simplest approach is to identify images that are exact or near duplicates of one another. While this method is robust for a very similar set of images, it is not suitable for a highly diverse collection, for two reasons. First, it will not identify and match the contents of two images if they share non-salient but similar backgrounds. Second, if there are no duplicates in the entire collection of images, no reliable image relationships can be established. Another common approach in image visualization is to arrange images by their clustered low-level features. Global coherence in visual features is maximized therein, but when a query topic itself is not easy to qualitatively represent using low-level features (e.g. "Find shots of a tall building (with more than 5 floors above the ground)"), this method may fail. To better handle a very large collection of diverse images, a hierarchical clustering system (or self-organizing map) can be used, which ensures both coherence (via clustering) and diversity (via a multi-level hierarchy). Even for modern search systems, however, the computational requirements of this algorithm are still too great.

Intuitive Display

Another problem in inspecting image results is human understanding of what the search engine was trying to judge as relevant; not even state-of-the-art query processing algorithms can directly map human query input to effective automatic searches. Instead of addressing the problem at query input, an alternative approach is adopted to assist the user in understanding image search results and more generally, sets of unordered images. While this approach is a suitable complement to low-level clustering, the resulting display may not resemble the features derived from the reduced dimensionality due to distortions incurred from the incremental movement process, especially in regions of the reduced space that are very dense with samples.

Engaged and Guided Browsing

Finally, planning for future usage, search paradigms must contend with several other challenges, such as loss of relevance to a user's query, linear result navigation, and the next generation of small, mobile displays. To fully utilize a user's inspection ability, a system must be engaging and guided by user preferences. Traditional page-based navigation is time-consuming and can be boring. The process of flipping to a new page of deeper results is visually disruptive and takes time to navigate. Also, in small display environments, the physical display space is small and precious. Methods must be adapted to best utilize this area while balancing the goals of helping users and conveying the most information.

Solutions

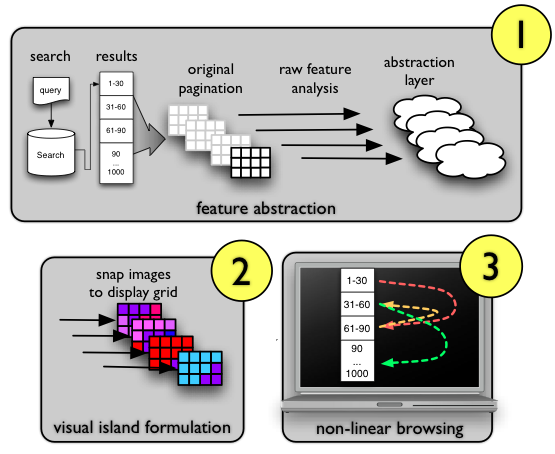

island formation and non-linear browsing.

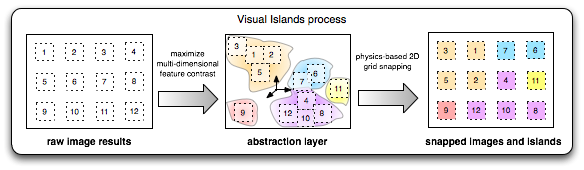

Our solution to these problems is a new paradigm called Visual Islands. With Visual Islands, users can more quickly inspect a large set of images because they have been spatially located next to images with similar visual content. This strategic placement encourages visual result coherence and is more intuitive than an unorganized display because users can perceive a gradual transition from dominant to non-dominant concepts. The three steps necessary to formulate Visual Islands are described briefly below.

Feature Extraction

Computing the distance between a set of images is not trivial. There are numerous forms of raw features, like meta-data (time, GPS location, user-based tags, etc.), low-level features (color, texture, etc.), and mid-level semantics, that can be used for distance measurement. To maintain a flexible and robust system design, feature input is not limited to any one type. The only requirement is that the raw features can be numerically represented in a score vector for each image in consideration. For this work, we utilize a large set of mid-level semantic classifiers for our features, which allows the Visual Islands formulation to describe images with easy-to-understand visual concepts like 'outdoor', 'smoke', and 'person' instead of low-level color or edge features, whose differences in value may be imperceptible to users.

Abstraction Layer

An abstraction layer is a new subspace that maps the raw feature space into a generic two-dimensional space. The mapping is constrained to a 2D representation because image results will ultimately be organized in a fixed-size display space. Display optimization is performed for only two dimensions of visualization, so these two dimensions should be of high utility. For example, if all images in a result set were green then a color feature would be a poor dominant feature because there is no contrast between different result images. However, if half of the images were mostly green (pictures of grassy fields) and half of the images were orange (pictures of sunsets) then a color feature would very cleanly partition these images and it is said to be dominant.
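As a rough illustration of what makes a feature "dominant", the sketch below ranks feature dimensions by their variance across the result set and keeps the top two. Using variance as the contrast measure is an assumption made here for illustration only; this page does not state how the abstraction layer is actually computed, and `top_two_dimensions` and `project` are hypothetical helpers.

```python
from statistics import pvariance

def top_two_dimensions(scores):
    """Return the indices of the two dimensions with the largest variance.

    `scores` is a list of equal-length score vectors, one per image.
    Variance is only a stand-in for "contrast" (an assumption, not the
    published method).
    """
    n_dims = len(scores[0])
    variances = [pvariance([vec[d] for vec in scores]) for d in range(n_dims)]
    ranked = sorted(range(n_dims), key=lambda d: variances[d], reverse=True)
    return ranked[:2]

def project(scores, dims):
    """Map each score vector to a 2D point using the chosen dimensions."""
    return [(vec[dims[0]], vec[dims[1]]) for vec in scores]

# Hypothetical scores; columns: greenness, orangeness, 'outdoor'.
scores = [
    [0.9, 0.1, 1.0],  # grassy field
    [0.8, 0.2, 1.0],  # grassy field
    [0.1, 0.9, 1.0],  # sunset
    [0.2, 0.8, 1.0],  # sunset
]
dims = top_two_dimensions(scores)
points = project(scores, dims)
```

In this toy set every image is 'outdoor', so that column has no contrast and is not selected, while the two color columns split the images cleanly.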

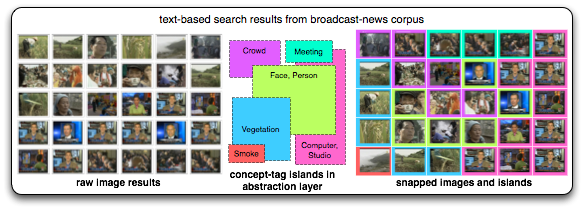

Grid-snapped Results

After the computation of an abstraction layer, images must be snapped to a page of fixed positions on a 2D grid. This process can be complicated because it must preserve the proximity of similar images both globally (e.g. pictures of people versus pictures of animals) and locally (e.g. pictures of the same person). For this work, two different snapping techniques (corner snapping and string snapping) were developed and evaluated. In both methods, image positions are ordered for snapping based on decreasing distance to the center of the grid. We define the center of the grid to be at half of the vertical height and horizontal width of the page. During processing, corner snapping iteratively assigns the closest image to the current cell. String snapping also assigns closest images, but at each assignment all other image positions are updated such that each pair-wise distance is minimally distorted. To better understand this process, excerpts from the narrated presentation are included for the corner algorithm [Flash SWF, 0.6 M] and the string algorithm [Flash SWF, 1 M].
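The corner snapping idea can be sketched as a greedy loop: visit grid cells from the farthest to the nearest relative to the grid center, and give each cell the closest image that has not yet been placed. This is a minimal sketch, not the authors' implementation. It assumes image positions are already scaled to grid coordinates and that the center lies midway between the first and last cell indices; `corner_snap` is a hypothetical name. String snapping, which also updates the remaining positions after each assignment, is not shown.

```python
import math

def corner_snap(points, rows, cols):
    """Greedy corner-snapping sketch.

    `points` maps image id -> (x, y) in grid coordinates, with x in
    [0, cols - 1] and y in [0, rows - 1] (an assumed normalization).
    Returns a dict mapping (row, col) -> image id.
    """
    center = ((cols - 1) / 2, (rows - 1) / 2)
    cells = [(r, c) for r in range(rows) for c in range(cols)]
    # Visit the cells farthest from the center first, so corners fill first.
    cells.sort(key=lambda rc: math.dist((rc[1], rc[0]), center), reverse=True)

    remaining = dict(points)
    layout = {}
    for r, c in cells:
        if not remaining:
            break  # fewer images than cells
        # Assign the closest image that has not been placed yet.
        nearest = min(remaining, key=lambda i: math.dist(remaining[i], (c, r)))
        layout[(r, c)] = nearest
        del remaining[nearest]
    return layout

points = {"a": (0.1, 0.1), "b": (0.9, 0.2), "c": (0.2, 0.8), "d": (1.0, 0.9)}
layout = corner_snap(points, rows=2, cols=2)
```

On this 2x2 example each image lands in the corner it is nearest to.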

Unique Applications for Visual Islands

Visual Islands optimally organizes image results for fast, intuitive inspection. Looking more closely at how these islands are formulated, we can find other unique applications for Visual Islands that are not easily achieved by traditional image display systems.

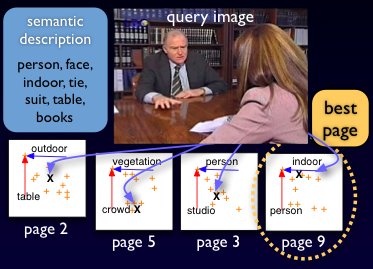

Non-linear Browsing

Non-linear browsing allows users to quickly jump to a section of results that is very similar to an image of high relevance. Traditionally, this process is referred to as relevance feedback and may involve another iteration of image retrieval and scoring. Avoiding this potentially expensive operation, Visual Islands leverages pre-computed result lists and strategically places the user on another page of results that is highly correlated with his or her selected image. In the figure on the right, the query image (or the image the user is interested in) has a set of detected semantics. Through the Visual Islands process, pairs of these semantics were computed to be dominant dimensions in subsequent result pages. To evaluate the fitness of a page, we compute the distance from the user-selected image to the page origin (the top-left corner). By selecting the page with the shortest distance, Visual Islands can guide the user to a page with very similar dominant features and ideally more results that are highly similar to the user-selected image.
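The page-selection step described above reduces to a minimum over distances. The sketch below assumes that the position of the selected image on each candidate page has already been computed; how that position is derived is not specified on this page. The page labels and the `best_page` helper are hypothetical.

```python
import math

def best_page(positions_by_page):
    """Pick the page where the selected image lies closest to the origin.

    `positions_by_page` maps a page id to the (x, y) position of the
    user-selected image on that page, with (0, 0) as the top-left corner.
    """
    return min(positions_by_page, key=lambda p: math.hypot(*positions_by_page[p]))

# Hypothetical pages, labeled by their pair of dominant concepts.
positions = {
    "outdoor/person": (3.0, 4.0),
    "smoke/building": (1.0, 1.0),
    "sky/road": (0.0, 2.0),
}
choice = best_page(positions)
```

Because only pre-computed positions are compared, no new retrieval or scoring pass is needed to make the jump.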

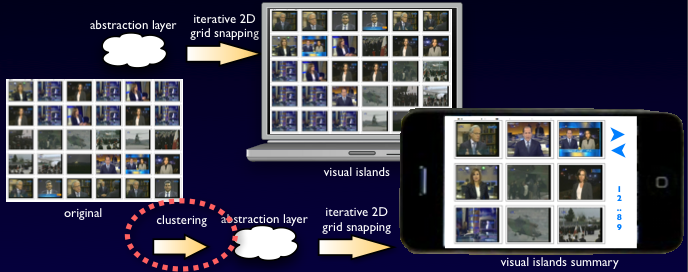

Small Display Environments

Small display environments are becoming increasingly popular as the bandwidth for and proliferation of mobile devices continue to grow. Currently, few image retrieval systems have adequate solutions for small-screen systems. Here, the user is limited not only by the total screen size available but also by the pixel count on that screen. Visual Islands allows both constraints to be handled gracefully by displaying the most important image results for a single page. Here, importance is determined by clustering among low-level features before the images are projected into the abstraction layer. The size of the display is always known, so the number of clusters is chosen to match the total number of output positions on the new display. The filtering of images at the feature level, instead of the abstraction level, guarantees that rare but important image results will not be lost. Although this technique was not formally evaluated in this work, the seamless application of Visual Islands is a powerful first pass for the removal of redundant and non-significant images from small-screen displays.
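One way to picture this filtering step is to cluster the feature vectors into as many groups as there are display positions and keep one image per group. The sketch below uses plain k-means with a deterministic start and picks the member nearest each centroid. Both choices are assumptions for illustration; this page does not name the clustering algorithm or how a representative is chosen, and `representatives` is a hypothetical helper.

```python
import math

def representatives(features, k, iterations=20):
    """Return one representative image index per cluster (k-means sketch)."""
    k = min(k, len(features))
    dims = len(features[0])
    # Deterministic start: use the first k feature vectors as centroids.
    centroids = [list(f) for f in features[:k]]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for idx, f in enumerate(features):
            nearest = min(range(k), key=lambda j: math.dist(f, centroids[j]))
            clusters[nearest].append(idx)
        for j, members in enumerate(clusters):
            if members:  # keep the old centroid if a cluster empties out
                centroids[j] = [
                    sum(features[i][d] for i in members) / len(members)
                    for d in range(dims)
                ]
    # The representative is the member closest to its cluster centroid.
    return [
        min(members, key=lambda i: math.dist(features[i], centroids[j]))
        for j, members in enumerate(clusters)
        if members
    ]

# Five images, but only two positions on the small display.
features = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [5.1, 5.0], [0.0, 0.1]]
picked = representatives(features, k=2)
```

Here `k` stands for the number of output positions on the display, so the two well-separated groups each contribute one image.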

Evaluation

Visual Islands was evaluated for speed and performance increases measured while finding relevant images for a query topic. Keyframes extracted from the TRECVID2005 video dataset were utilized in this experiment because they had full annotations for a large set of semantic concepts. For more information about these concepts or the dataset, please refer to the related publications below. Through timed evaluations of 8 unique users and 18 unique topics, it was found that Visual Islands improved both user precision and recall on the search task and reduced the time that users needed to find relevant results. In all experiments, to encourage accurate annotation unbiased by user familiarity with a topic, the system allowed a user to skip a topic if he or she felt that the topic was too vague or unfamiliar.

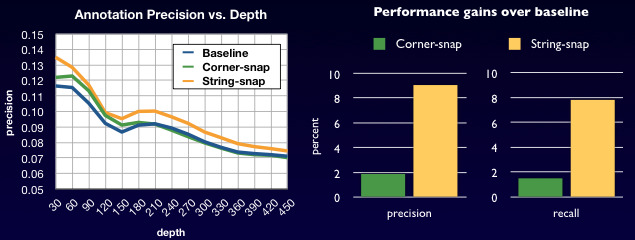

Performance

Visual Islands was created to offer a more coherent, intuitive, and engaging browsing experience. Performance gains can be measured by analyzing the precision and recall of users when judging image relevance to a specific query topic. To guarantee a consistent benchmark, a set of result images for each topic was automatically generated before the experiment and different users were asked to judge relevance on these closed sets. The chart on the right demonstrates that at all depths, image organization derived from Visual Islands string snapping yielded better precision across all users. Additionally, the string snapping method achieved a relative improvement of 8% over corner snapping alone. The implications of these two observations are that Visual Islands consistently keeps users focused on truly relevant images (increased precision) and helps to avoid confusion by spatially separating non-relevant images (increased recall).
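For readers unfamiliar with the metrics, the sketch below computes precision and recall at a given depth using the standard definitions. The exact way depth was measured in the experiment is not described on this page, so treat the inputs as assumptions. The image ids and the `precision_recall_at_depth` helper are hypothetical.

```python
def precision_recall_at_depth(results, relevant, depth):
    """Standard precision and recall over the first `depth` results.

    `results` is an ordered list of image ids and `relevant` is the set
    of ids judged relevant for the topic.
    """
    top = results[:depth]
    hits = sum(1 for item in top if item in relevant)
    precision = hits / len(top) if top else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall

results = ["a", "b", "c", "d", "e"]
relevant = {"a", "c", "e", "z"}
p, r = precision_recall_at_depth(results, relevant, depth=4)
```

At depth 4, two of the four inspected images are relevant and two of the four relevant images have been found.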

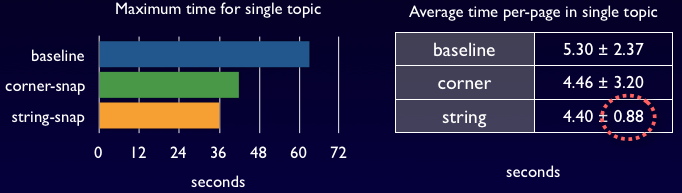

Speed

Another goal of Visual Islands was to reduce the burden of cognition on users by emphasizing the coherency and diversity of images in a single page; the ideal organization places similar images nearer and dissimilar images further away. We quantify the cognitive effect on users directly by measuring the time required for a user to find the first, second, third, etc. relevant image in a single page. As indicated by the graph on the right, the string-snapping method introduced by Visual Islands decreases the average time required to find relevant images over all users. This time decrease is observed both for consecutive relevant images (finding the first, second, third, etc. relevant image on a page) as well as the cumulative number of relevant images for a single topic. A second significant observation is that the time decrease was consistent (low statistical variance) across the entire user pool, which consisted of both novice and expert users of image retrieval systems.

Examples

Because Visual Islands is a visual approach, the best way to understand how it works is to see an example. Two videos have been created for this section. The first video provides a narrated presentation of the motivation, framework, and an experiment. The second video provides several visual examples of results before (raw results) and after (organized results from Visual Islands) processing, using both TRECVID and flickr image data on some example queries.

Visual Islands Presentation

This video is a narrated presentation of the Visual Islands work. This presentation was given at CIVR 2008. The runtime of this presentation is about 22 minutes.

- High quality Quicktime MOV [109 M]

- Web quality (lower frame rate + high compression) Flash SWF [46.4 M]

Example Transformations

This video presents several raw image result sets and their display configuration after Visual Islands processing. Examples were collected from TRECVID and flickr using several textual query topics. The runtime of this presentation is about 2 minutes.

- High quality Quicktime MOV [14.2 M]

- Web quality (lower frame rate + high compression) Flash SWF [3.4 M]

People

Eric Zavesky and Shih-Fu Chang first discussed alternative visual presentation strategies on the ride home from a conference. Through late 2007, Cheng-Chih Yang assisted Eric in realizing one of these strategies in an intuitive and useful form. We are still seeking the best usage for Visual Islands and are eager to discuss new ways that it can be used.

Publications

E. Zavesky, S.-F. Chang, C.-C. Yang. "Visual Islands: Intuitive Browsing of Visual Search Results." In ACM International Conference on Image and Video Retrieval, Niagara Falls, Canada, July 2008. [PDF] Best Oral Paper Award

A. Yanagawa, S.-F. Chang, L. Kennedy, W. Hsu. "Columbia University's Baseline Detectors for 374 LSCOM Semantic Visual Concepts." Columbia University ADVENT Technical Report #222-2006-8, March 20, 2007. [PDF]

Related Projects

Columbia374: Columbia University's Baseline Detectors for 374 LSCOM Semantic Visual Concepts

Columbia University at TRECVID 2006: Semantic Visual Concept Detection and Video Search

CuVid Video Search Engine 2005: Columbia News Video Search Engine and TRECVID 2005 Evaluation

Image Near-Duplicate Detection by Part-based Learning: Detecting Image Near-Duplicate for Linking Multimedia Content

Automatic Feature Discovery in Video Story Segmentation: Automatic Feature Discovery in Video Story Segmentation
