
Mining Audio-Visual Events in Multi-Sensor Surveillance Systems

Summary

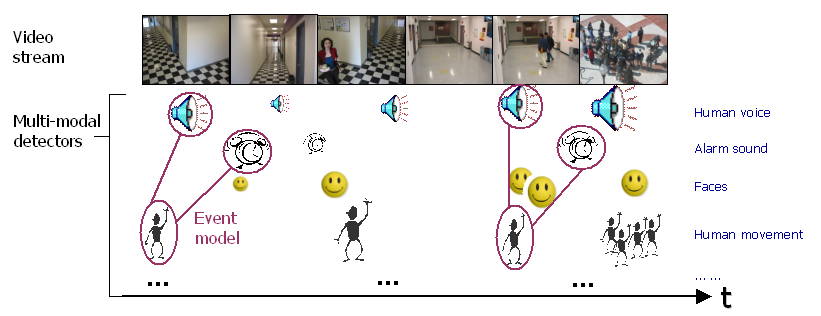

In this project, we are interested in the problem of learning audio-visual events from the outputs of low-level detectors. These detectors may include generic detectors for faces, motion, speech, and other audio-visual events. The challenge is to learn the statistical properties of sparse but repetitive events from unlabelled audio-visual streams. Further issues include incorporating a small amount of labelled data, integrating multiple sensors, and making the models invariant across temporal scales.

We have obtained a collection of videos recorded at different public locations in two buildings. The cameras are fixed, without pan-tilt-zoom control, simulating a typical surveillance environment. We are in the process of applying various low-level object detectors and developing suitable spatio-temporal mining methods.

In a related project on video pattern mining, we have developed a statistical model based on the Hierarchical Hidden Markov Model (HHMM), along with methods for feature selection and model structure adaptation. These techniques have been applied to mining patterns in sports video, with promising results.

People

Links

DVMM Project on Video Pattern Mining

For problems or questions regarding this web site, contact the Web Master.

Last updated: September 27, 2003.