Enhancing and Assessing Photo Quality: Compositional Perspective

Abstract

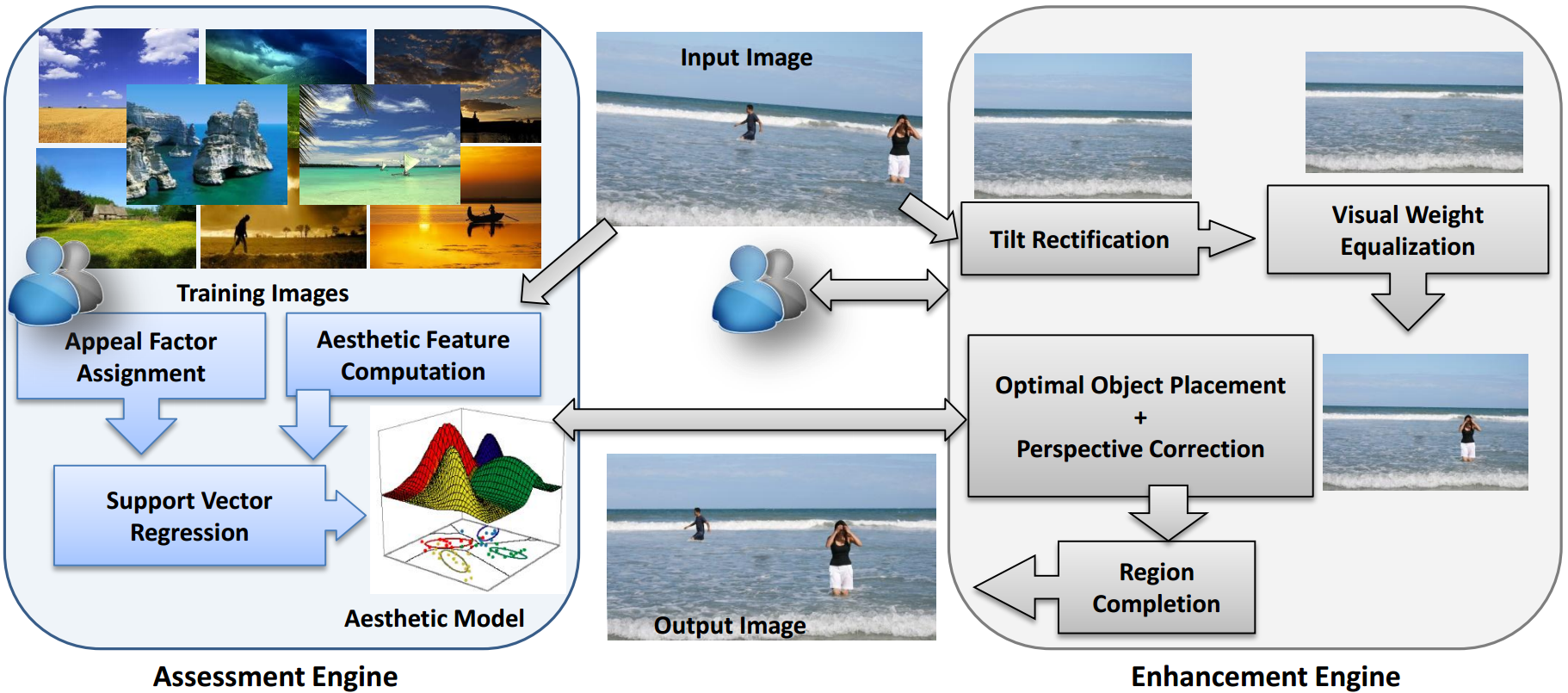

This article presents an interactive application that enables users to improve the visual aesthetics of their digital photographs using several novel spatial recompositing techniques. This work differs from earlier efforts in two important aspects: (1) it addresses photo quality assessment and improvement in an integrated fashion, and (2) it enables the user to make informed decisions about improving the composition of a photograph. The tool facilitates interactive selection of one or more foreground objects present in a given composition, and the system recommends where they can be relocated in a manner that optimizes a learned aesthetic metric while obeying semantic constraints. For photographic compositions that lack a distinct foreground object, the tool provides the user with crop or expansion recommendations that improve the aesthetic appeal by equalizing the distribution of visual weights between semantically different regions. The recomposition techniques presented in the article emphasize learning support vector regression models that capture visual aesthetics from user data and seek to optimize this metric iteratively to increase the image appeal. The tool demonstrates promising aesthetic assessment and enhancement results on a variety of images and provides insightful directions for future research.

Method Summary and Results

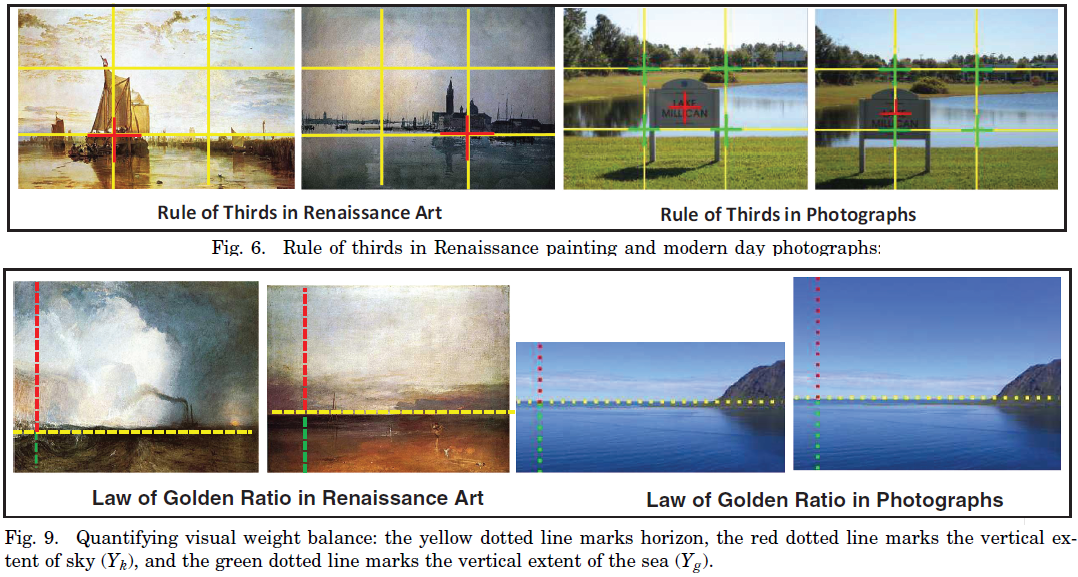

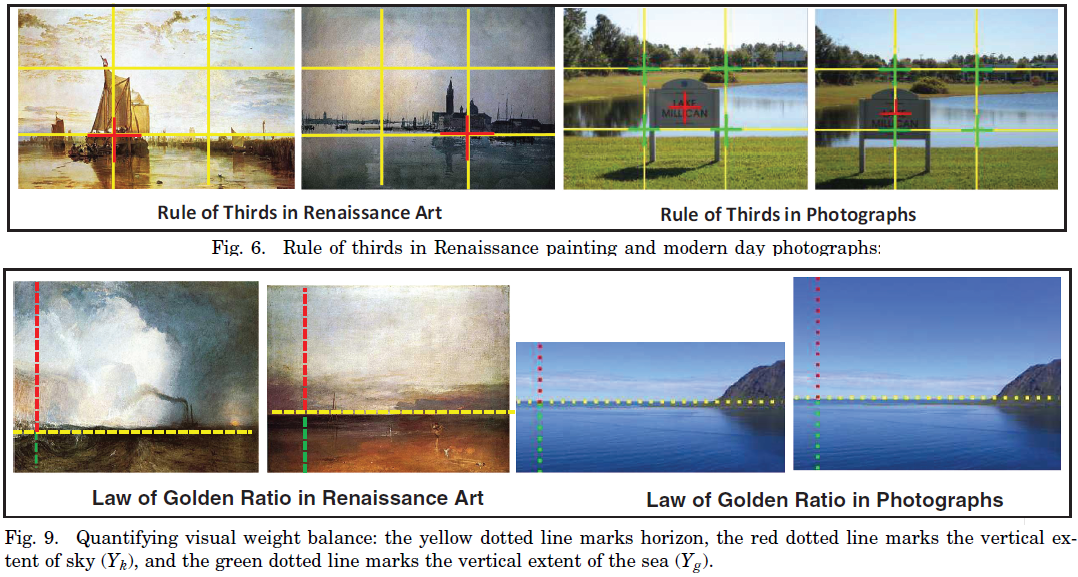

We formulate photo quality evaluation as a machine learning problem in which we characterize a human-rated photograph in terms of its adherence to the rules of composition. Part of our method is comparable to saliency-based approaches that estimate the distribution of visual attention in photographs. We complement the saliency information extracted from an image with a high-level semantic segmentation technique that infers the geometric context of a scene. Using these methods, we extract aesthetic features that measure the deviation of a typical composition from the ideal photographic rules of composition.
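As a rough illustration of a feature that measures deviation from a compositional rule, the sketch below takes the rule of thirds as one example. The text does not name the specific rules or features used, so the function `thirds_offset`, its normalization by the image diagonal, and the use of the subject's centroid are all assumptions made for illustration.

```python
import math


def thirds_offset(cx, cy, width, height):
    """Normalized distance from the point (cx, cy) to the nearest
    rule-of-thirds intersection of a width x height image.

    Returns 0.0 when the point lies exactly on an intersection.
    Hypothetical feature; not taken from the original method.
    """
    # The four intersections of the lines that split the frame into thirds.
    points = [(width * i / 3, height * j / 3) for i in (1, 2) for j in (1, 2)]
    nearest = min(math.hypot(cx - px, cy - py) for px, py in points)
    # Divide by the diagonal so the feature is independent of resolution.
    return nearest / math.hypot(width, height)


# A subject centred in a 900 x 600 frame is away from every intersection.
centre_offset = thirds_offset(450, 300, 900, 600)
# A subject placed on the upper-left intersection has zero deviation.
on_point_offset = thirds_offset(300, 200, 900, 600)
```

A smaller value indicates closer adherence to the rule, so a feature of this kind could serve as one input to a learned aesthetic model.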

These aesthetic features are subsequently used as input to two independent Support Vector Regressors in order to learn the visual aesthetic model. This learned model is then integrated into our photo-composition enhancement framework. To this end, we make the following contributions in this article: (1) perform an empirical study on visual aesthetics using real human subjects on real-world images; (2) find a smooth mapping between user input visual attractiveness and high-level aesthetic features; (3) apply semantic scene constraints while recompositing a photograph; (4) introduce an interactive tool that helps users to recompose photographs with some informed aesthetic feedback; and (5) bring photographic quality assessment and enhancement under a single unifying framework.
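For reference, the standard ε-insensitive support vector regression problem that each such regressor solves can be written as follows. Here $x_i$ stands for the aesthetic feature vector of image $i$ and $y_i$ for its human-assigned appeal score; the feature map $\phi$, the penalty $C$, and the tube width $\varepsilon$ are generic symbols, since the text does not specify the kernel or hyperparameters used.

```latex
\begin{aligned}
\min_{w,\,b,\,\xi,\,\xi^{*}} \quad
  & \tfrac{1}{2}\lVert w \rVert^{2} + C \sum_{i=1}^{n} \left( \xi_i + \xi_i^{*} \right) \\
\text{subject to} \quad
  & y_i - w^{\top}\phi(x_i) - b \le \varepsilon + \xi_i, \\
  & w^{\top}\phi(x_i) + b - y_i \le \varepsilon + \xi_i^{*}, \\
  & \xi_i \ge 0, \quad \xi_i^{*} \ge 0, \qquad i = 1, \dots, n.
\end{aligned}
```

Predictions that fall within $\varepsilon$ of the human score incur no penalty, which suits ratings that are themselves averages over several users.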

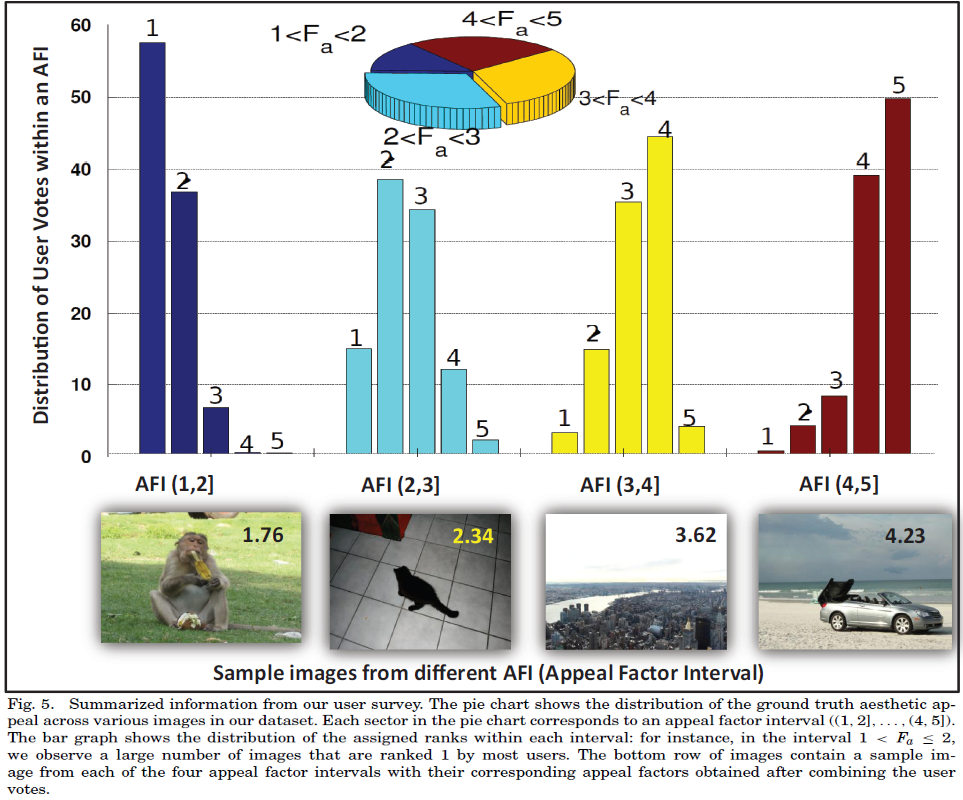

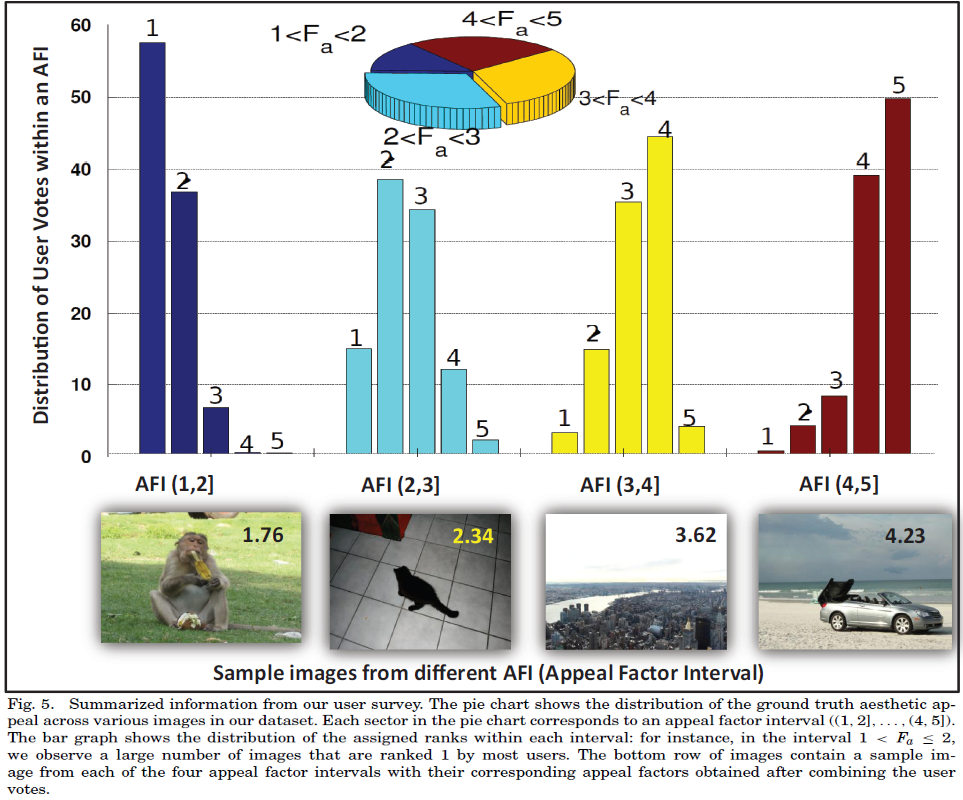

We conducted a thorough study of human aesthetics through a survey where 15 independent participants were asked to assign integer ranks to the photographs in our dataset from 1 to 5, with 5 being assigned to the most appealing. Further, while ranking, users were specifically instructed to eliminate bias from their ratings that might have emerged due to individual subject matter contained in a photograph, for instance, whether a user prefers mountains to sea or birds to animals. Each user was asked to rank no more than 30 images in a particular sitting in order to avoid undesirable variances in the ranking system due to fatigue or boredom. This process was further repeated 5 times to eliminate rankings from inconsistent users. After discarding the scores assigned by inconsistent users, we observed that the distributions were typically unimodal with low variance, enabling us to generate a single ground truth aesthetic appeal factor for each image (Fa) by averaging its assigned scores.
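The aggregation step described above can be sketched as follows. The text does not say how inconsistency was measured, so the criterion used here (the average spread of a user's repeated scores for the same image, compared against a hypothetical `max_spread` threshold) and the function names are assumptions for illustration only.

```python
from statistics import mean


def consistent_users(ratings, max_spread=1):
    """Return users whose repeated scores for the same image agree.

    ratings[user][image] is the list of 1-5 scores that user gave the
    image across repeated sessions. The spread criterion is an assumption.
    """
    keep = []
    for user, per_image in ratings.items():
        spreads = [max(scores) - min(scores) for scores in per_image.values()]
        if mean(spreads) <= max_spread:
            keep.append(user)
    return keep


def appeal_factor(ratings, image, users):
    """Average every retained score for one image (the Fa of the text)."""
    scores = [s for u in users for s in ratings[u].get(image, [])]
    return mean(scores)


# Toy data: u2 rates the same images very differently across sessions.
ratings = {
    "u1": {"a": [4, 4, 5], "b": [2, 2, 2]},
    "u2": {"a": [1, 5, 3], "b": [5, 1, 2]},
    "u3": {"a": [5, 4, 4], "b": [1, 2, 2]},
}
kept = consistent_users(ratings)
fa_a = appeal_factor(ratings, "a", kept)
```

In this toy example only `u1` and `u3` are retained, and the appeal factor of image `a` is the mean of their six scores.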

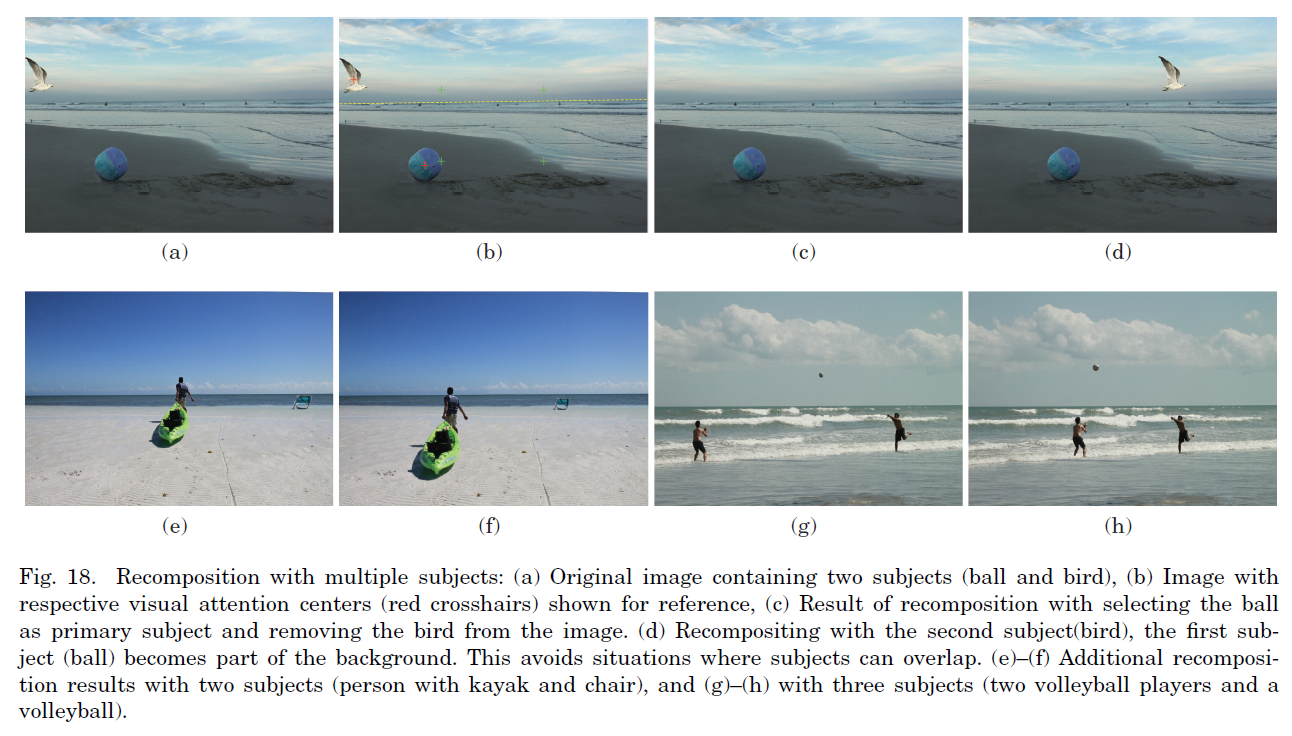

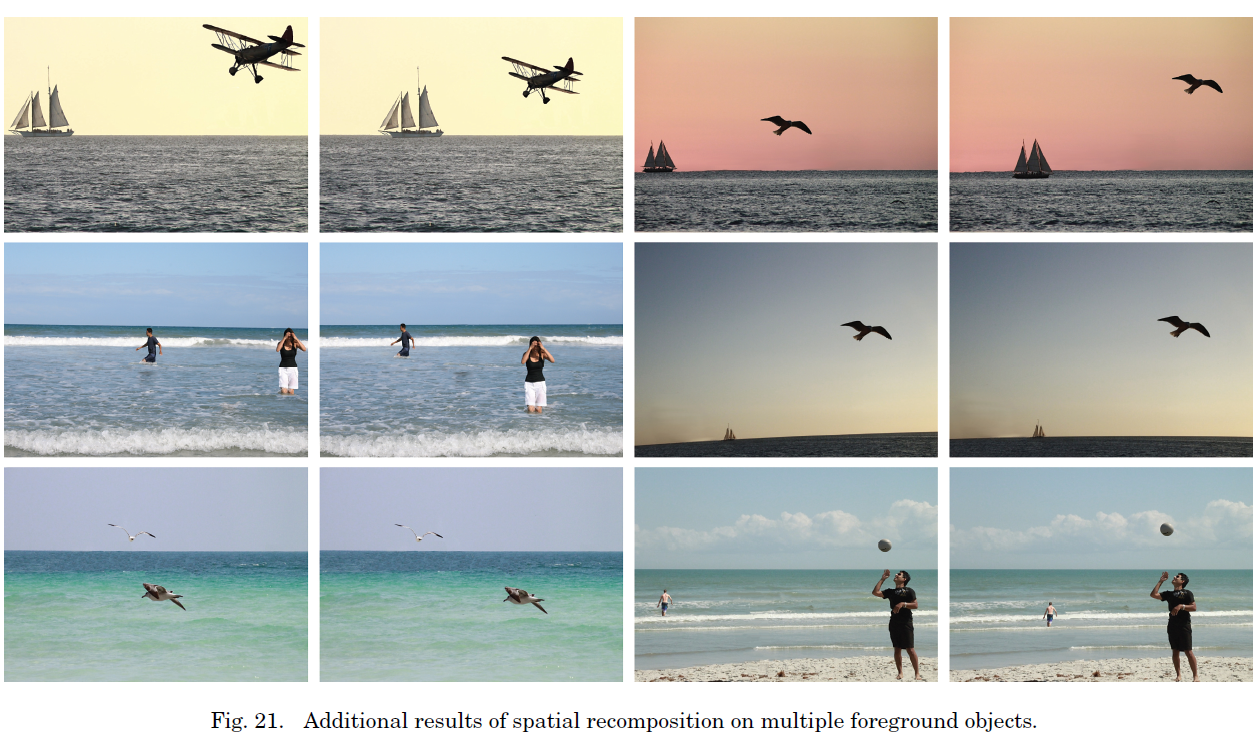

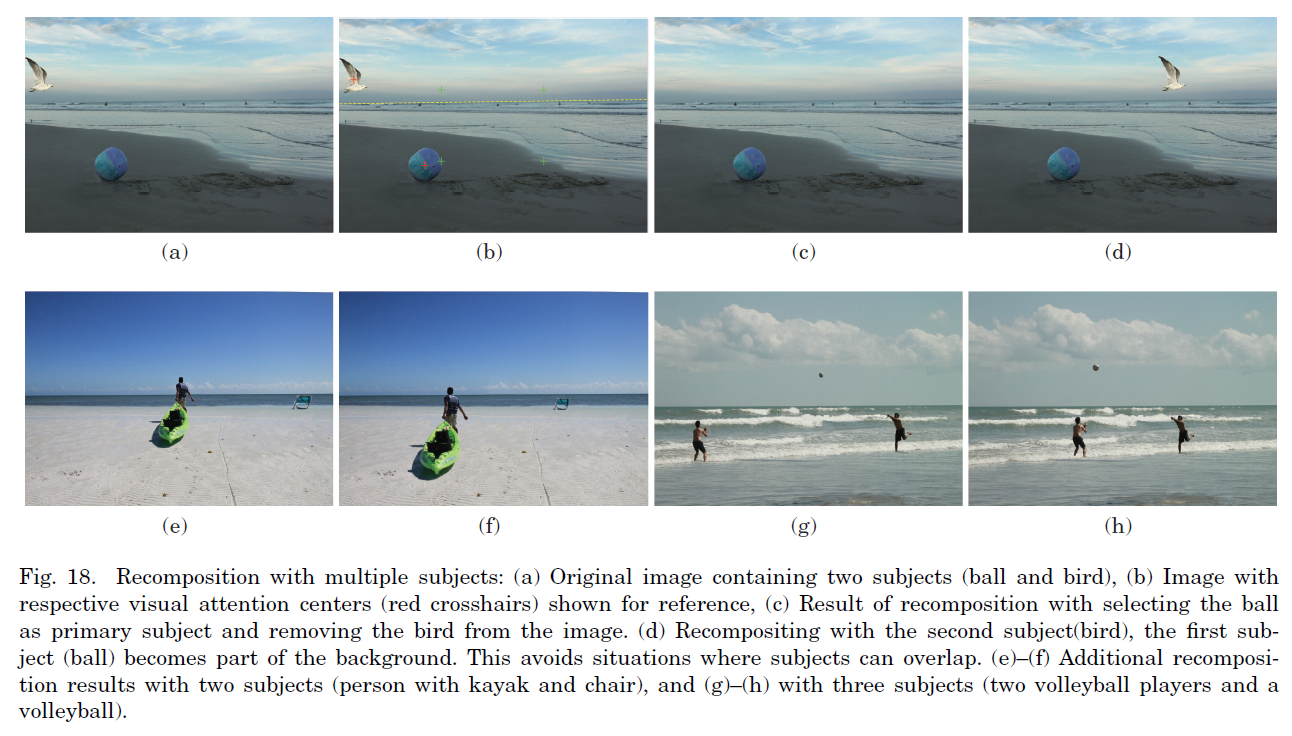

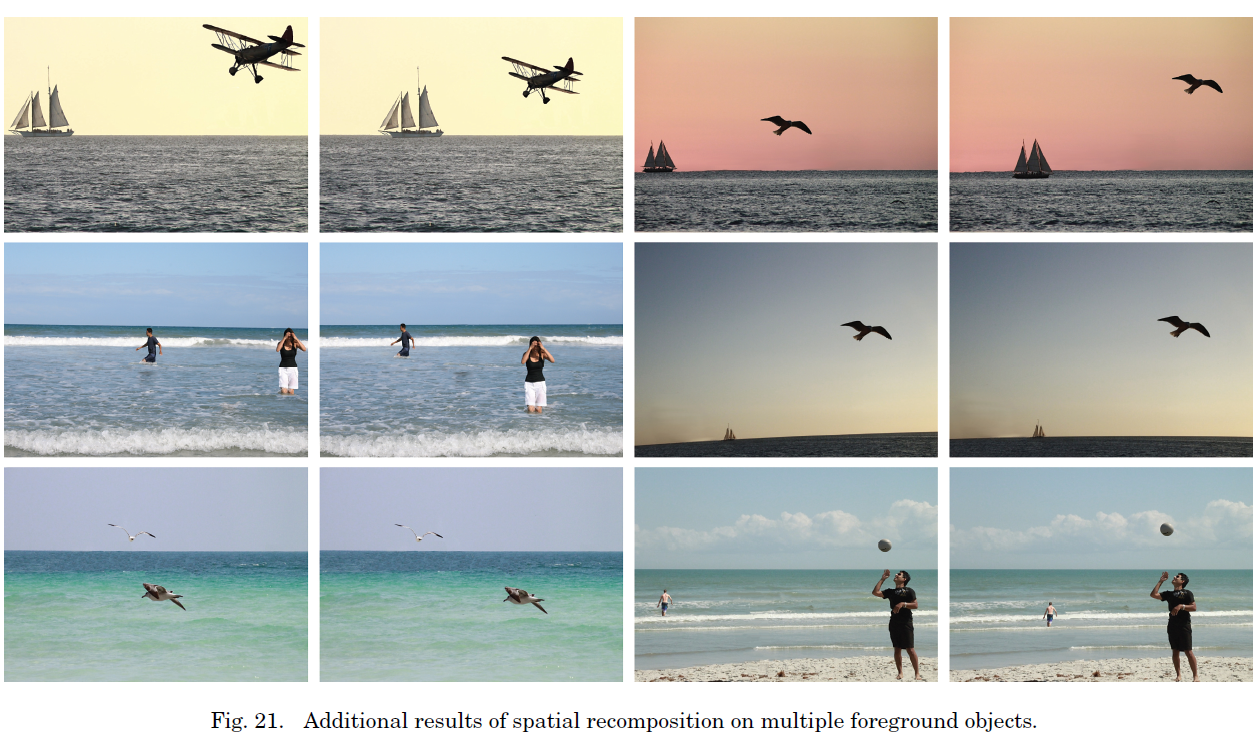

We primarily focus on outdoor photographic compositions, either with one or more foreground subjects or with no dominant foreground subject. For the former, we constrain our algorithm to relocate the objects to a more aesthetically pleasing location while respecting the scene semantics (e.g., a tree attached to the ground must remain in contact with the ground), rescaling them as necessary to maintain the scene’s perspective. This is a significant improvement over a foreground object-centric image-editing technique, which reconstructs an image from low-resolution patches subject to user-defined constraints. We also show how our technique can be extended to handle multiple foreground objects. In the case of photographs that lack a dominant subject, such as land/seascapes, we crop or expand the photograph so that an aesthetically pleasing balance between sky and land/sea is achieved.
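The crop recommendation for scenes without a dominant subject can be illustrated with a minimal sketch. It assumes the horizon row separating sky from land/sea is already known (for instance from the segmentation step), and it assumes the golden ratio as the target balance; the text only asks for an aesthetically pleasing balance, so `target`, `recommend_crop`, and the trimming policy are illustrative choices. Only cropping is shown, not expansion.

```python
GOLDEN_RATIO = (1 + 5 ** 0.5) / 2  # assumed target balance, not from the text


def recommend_crop(height, horizon_row, target=GOLDEN_RATIO):
    """Return (top, bottom) row bounds of a crop in which the larger of
    the two regions is `target` times the size of the smaller one.

    Rows [top, horizon_row) are sky; rows [horizon_row, bottom) are land/sea.
    """
    if not 0 < horizon_row < height:
        raise ValueError("horizon must lie strictly inside the image")
    sky = horizon_row
    ground = height - horizon_row
    if sky >= ground:
        # Sky is the dominant region (ties are resolved in favour of sky).
        if sky / ground > target:
            sky = ground * target      # too much sky: trim rows from the top
        else:
            ground = sky / target      # too little contrast: trim the bottom
    else:
        # Land/sea is the dominant region.
        if ground / sky > target:
            ground = sky * target      # too much ground: trim the bottom
        else:
            sky = ground / target      # too little contrast: trim the top
    top = horizon_row - round(sky)
    bottom = horizon_row + round(ground)
    return top, bottom


# A 600-row image with the horizon at mid-height keeps all of the sky
# and loses rows from the bottom.
top, bottom = recommend_crop(600, 300)
```

The function always keeps the currently dominant region dominant, which preserves the photographer's apparent emphasis; a fuller system would score candidate crops with the learned aesthetic model instead of a fixed ratio.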

Code/Data

A subset of the data, along with ground truth annotations, is available here.

Relevant Publications

[1] Subhabrata Bhattacharya, Rahul Sukthankar, Mubarak Shah, "A holistic approach to aesthetic enhancement of photographs", In Transactions of Multimedia Computing, Communications and Applications (TOMCCAP), vol. 7, no. Supplement, pp. 21:1-21:21, 2011.

[2] Subhabrata Bhattacharya, Rahul Sukthankar, Mubarak Shah, "A framework for photo-quality assessment and enhancement based on visual aesthetics", In Proc. of ACM International Conference on Multimedia (MM), Florence, IT, pp. 271-280, 2010. [Best Paper Nominee, Oral, 4/165]