What do Humans look for while labeling videos for Events?

Abstract

This paper addresses a fundamental question: how do humans recognize complex events in videos? Normally, humans view videos in a sequential manner. We hypothesize that humans can make high-level inferences, such as whether an event is present in a video, by looking at a very small number of frames, not necessarily in linear order. We attempt to verify this cognitive capability of humans and to discover the Minimally Needed Evidence (MNE) for each event. To this end, we introduce an online game-based event quiz that facilitates selection of the minimal evidence humans require to judge the presence or absence of a complex event in an open source video. Each video is divided into a set of temporally coherent microshots (1.5 secs in length) which are revealed only on player request. The player's task is to identify the positive and negative occurrences of the given target event with a minimal number of requests to reveal evidence. Incentives are given to players for correct identification with the minimal number of requests.

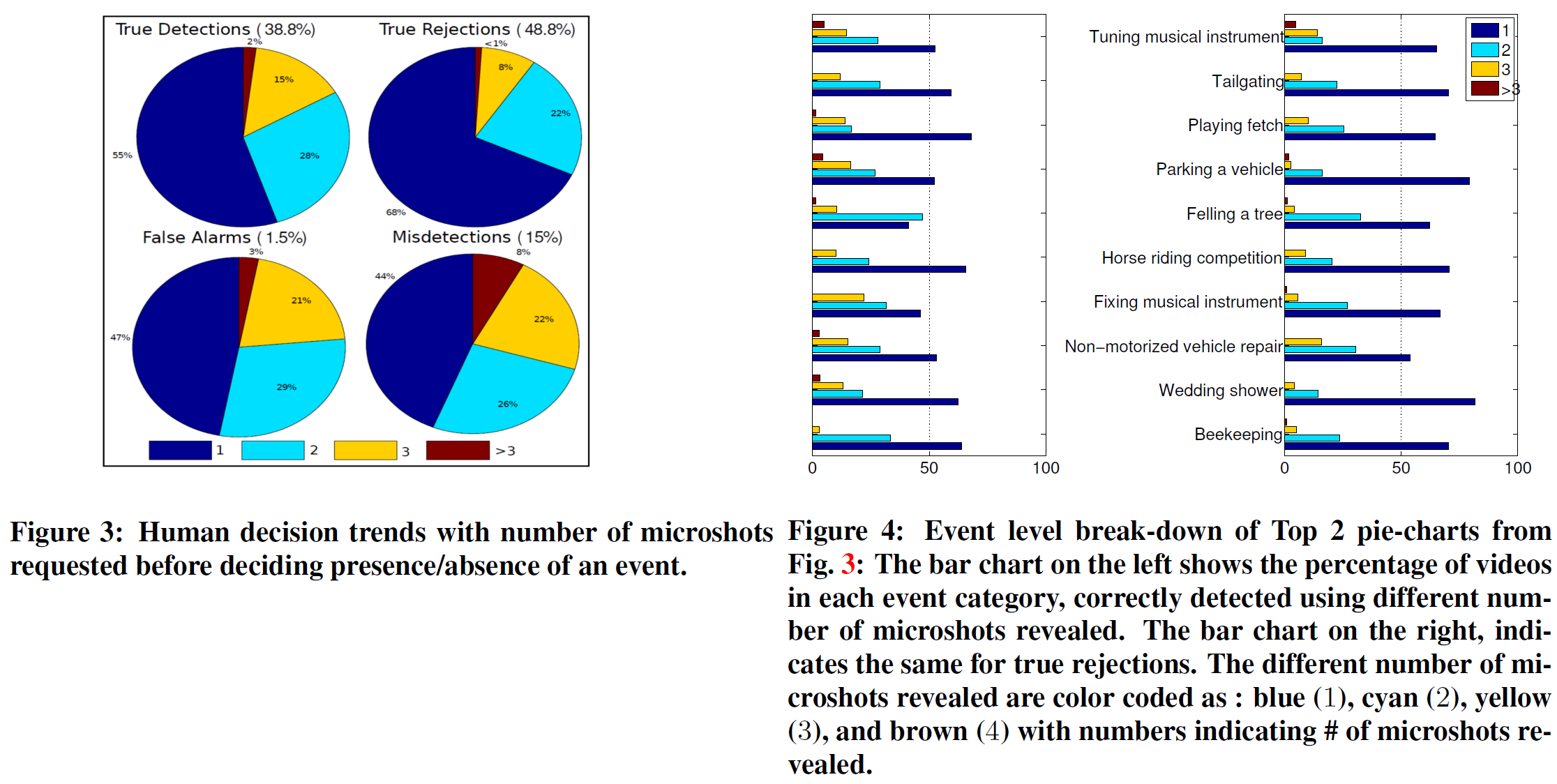

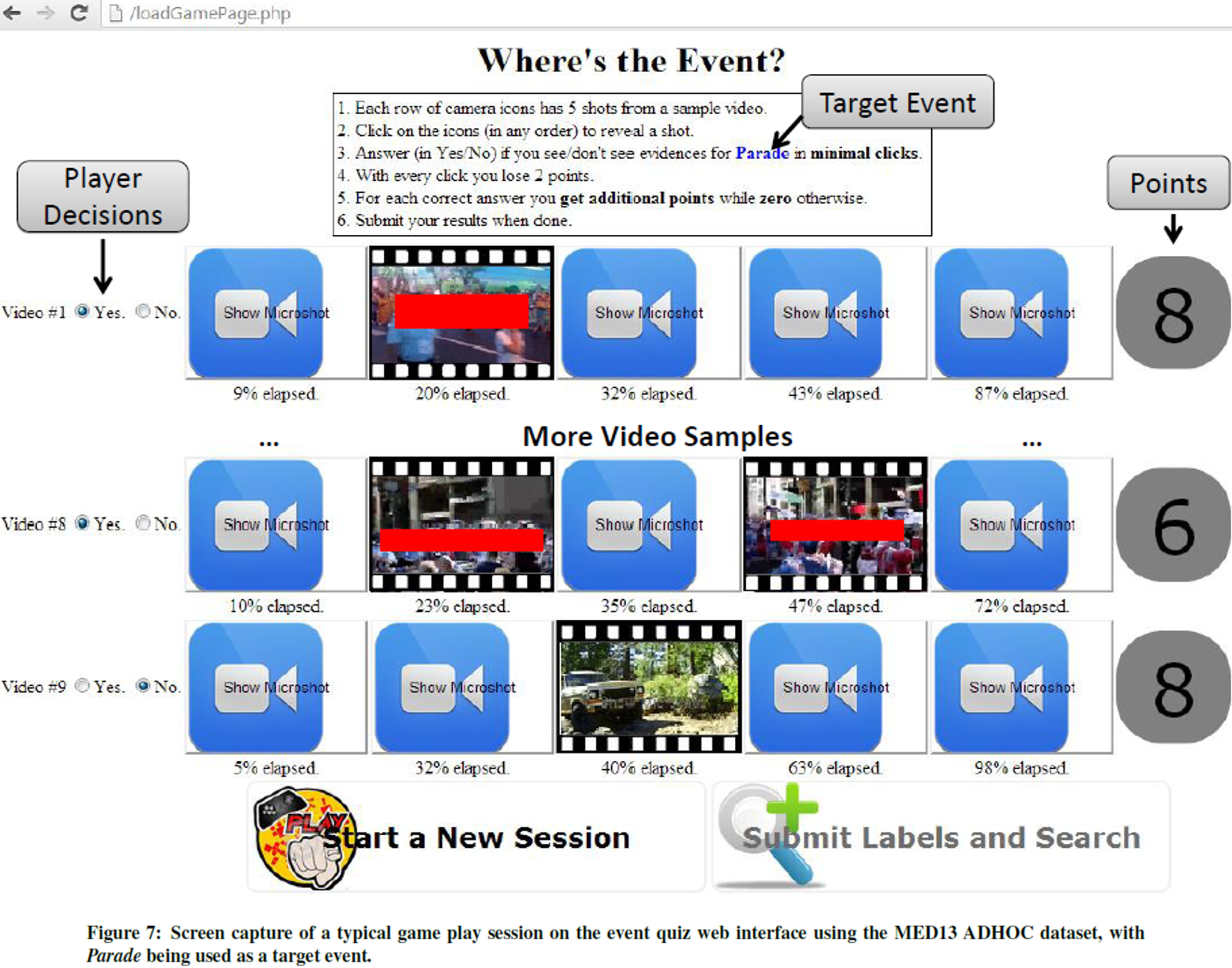

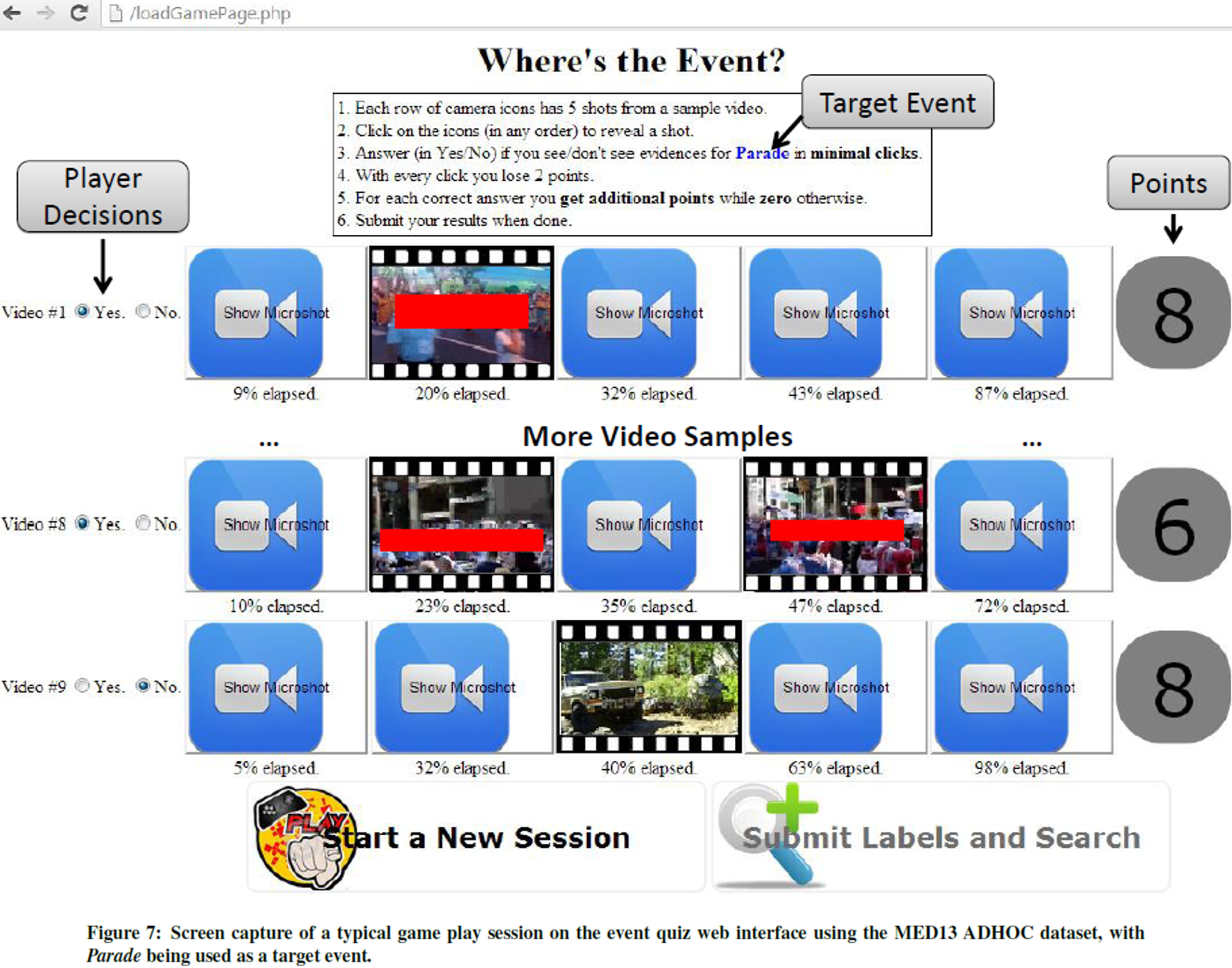

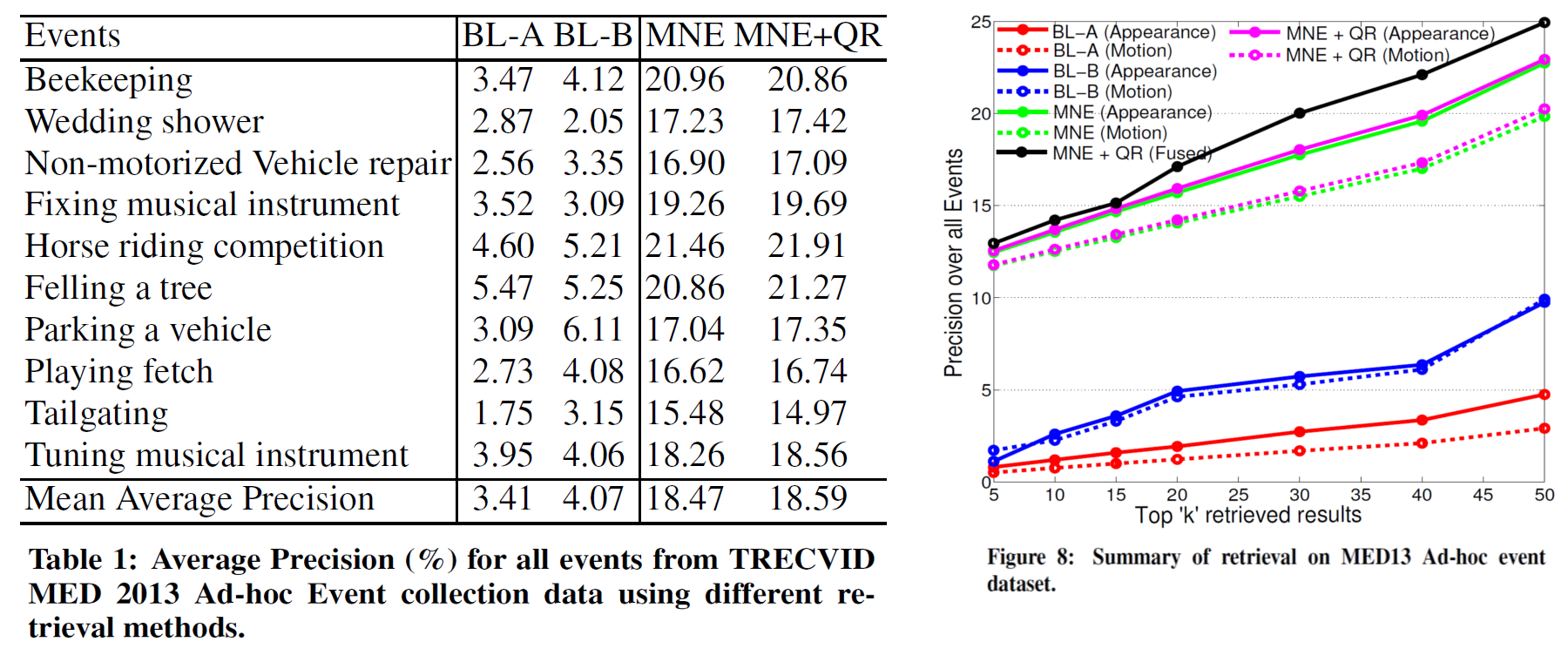

Our extensive human study using the game quiz validates our hypothesis: 55% of videos need only one microshot for correct human judgment, and events of varying complexity require different amounts of evidence for human judgment. In addition, the proposed notion of MNE enables us to select discriminative features, drastically improving the speed and accuracy of a video retrieval system.

Method Summary and Results

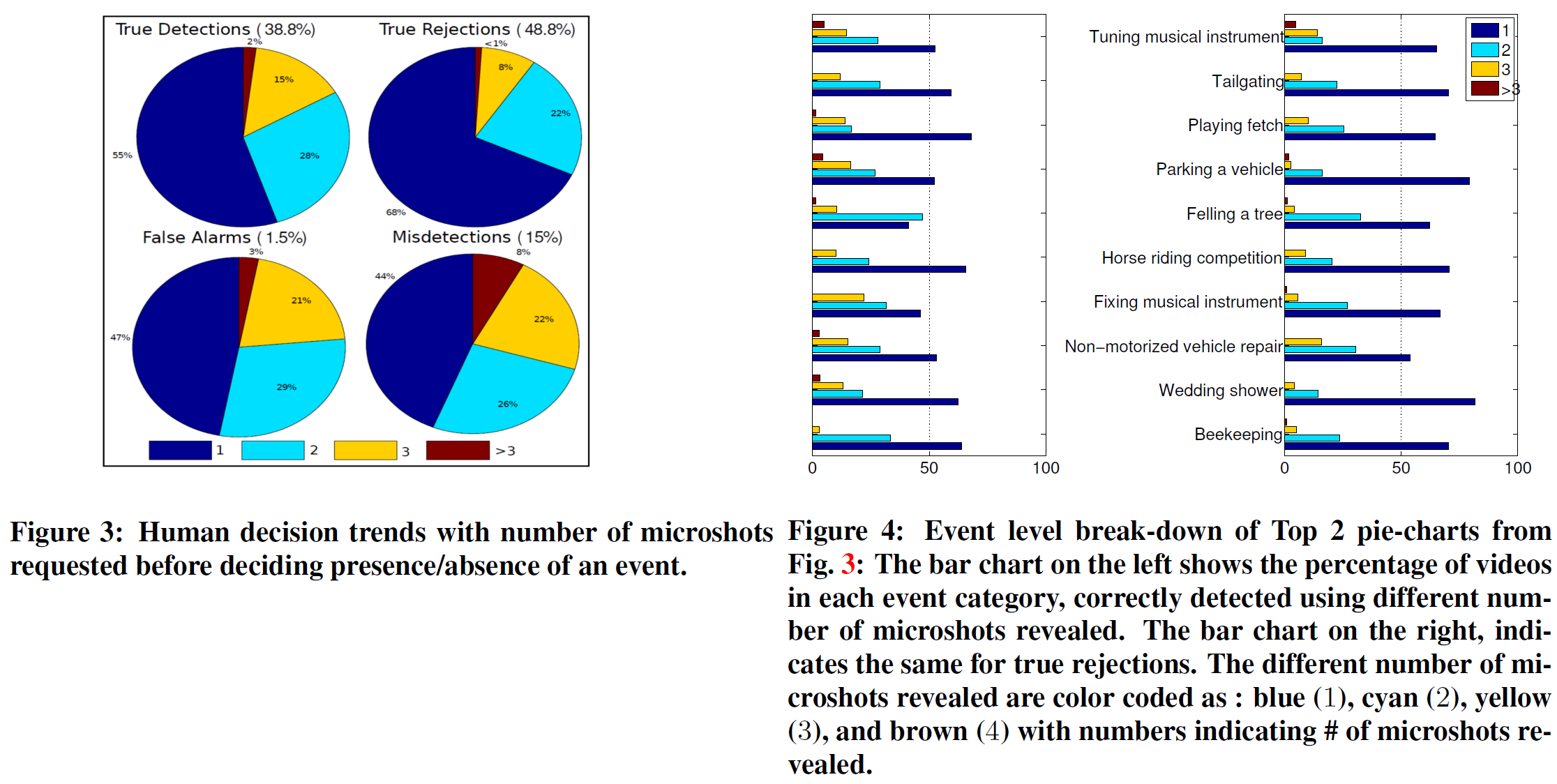

To this end, we develop the Event Quiz Interface (EQI), an interactive tool mimicking some of the key strategies employed by the human cognition system for high-level understanding of complex events in unconstrained web videos. EQI enables us to conduct an extensive study on human perception of events, through which we put forth the notion of Minimally Needed Evidence (MNE) to predict the presence or absence of an event in a video. MNEs are a collection of microshots (sets of spatially downsampled contiguous frames) from a video. Note that our emphasis on a scaled-down version of the original frames is consistent with the term Minimal in this context. Our study reinforces our hypothesis that in a majority of cases humans make correct decisions about an event with just a small number of frames. It reveals that a single microshot serves as an MNE in 55% of test videos to identify an event correctly, and that 68% of videos can be correctly rejected after humans have viewed only a single microshot.
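The microshot segmentation and the single-microshot statistic described above can be sketched roughly as follows. This is an illustrative sketch only, not the EQI implementation: the function names, the 1.5-second microshot length, the frame rate, and the example reveal counts are all assumptions made for the example, and spatial downsampling is not modeled.

```python
def split_into_microshots(num_frames, fps, shot_seconds=1.5):
    """Partition frame indices into contiguous microshots.

    Returns a list of (start, end) pairs with `end` exclusive.
    `shot_seconds` is an assumed microshot length; the last
    microshot may be shorter than the others.
    """
    if num_frames < 0 or fps <= 0 or shot_seconds <= 0:
        raise ValueError("num_frames must be >= 0; fps and shot_seconds must be > 0")
    frames_per_shot = max(1, round(fps * shot_seconds))
    return [
        (start, min(start + frames_per_shot, num_frames))
        for start in range(0, num_frames, frames_per_shot)
    ]


def share_resolved_within(reveal_counts, k=1):
    """Fraction of videos judged correctly after at most k revealed microshots.

    `reveal_counts` holds, for each correctly judged video, how many
    microshots the player requested before answering (hypothetical log format).
    """
    if not reveal_counts:
        return 0.0
    return sum(1 for count in reveal_counts if count <= k) / len(reveal_counts)


if __name__ == "__main__":
    # Hypothetical 100-frame clip at 30 fps.
    print(split_into_microshots(100, 30))
    # Hypothetical reveal counts for four videos, not data from the study.
    print(share_resolved_within([1, 1, 2, 3], k=1))
```

Under these assumptions, a figure such as the reported 55% would correspond to `share_resolved_within(counts, k=1)` computed over the positive test videos that players judged correctly.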

We make the following technical contributions in this paper: (a) we propose a novel game-based interface to study human cognition of video events, which can also be used in a variety of complex event recognition tasks that involve human supervision; (b) based on our extensive study, we demonstrate that humans can recognize complex events by seeing one or two chunks of spatially downsampled shots, each less than a couple of seconds in length; (c) we leverage positive and negative visual cues selected by humans for efficient retrieval; and finally, (d) we perform experiments that demonstrate significant improvement in event retrieval performance on two challenging datasets released under TRECVID, using off-the-shelf feature extraction techniques applied on MNEs versus evidence collected sequentially.

In addition, we conjecture that MNEs are useful beyond complex event recognition. They can be used to drive tasks including feature extraction, fine-grained annotation and detection of concepts. Furthermore, they can be used to investigate whether the underlying temporal structure in a video is useful for event recognition.

Code/Data

Software for the Event Quiz Interface is available here. The dataset is available from the TRECVID MED 2013 collection upon request from NIST.

Relevant Publications

- Subhabrata Bhattacharya, Felix Yu, Shih-Fu Chang, "Minimally Needed Evidence for Complex Event Recognition in Unconstrained Videos", In Proc. of ACM International Conference on Multimedia Retrieval (ICMR), Glasgow, UK, pp. pp-pp, 2014. [Best Paper (1/21), Oral, Acceptance Rate 19%]