Cinematographic Shot Classification using Lie Algebra

Abstract

In this paper, we propose a discriminative representation

of a video shot based on its camera motion and demonstrate

how the representation can be used for high level multimedia tasks

like complex event recognition. In our technique, we assume that

a homography exists between a pair of subsequent frames in a

given shot. Using purely image-based methods, we compute homography

parameters that serve as coarse indicators of the ambient

camera motion. Next, using Lie algebra, we map the homography

matrices to an intermediate vector space that preserves the

intrinsic geometric structure of the transformation. The mappings

are stacked temporally to generate vector time-series per shot. To

extract meaningful features from time-series, we propose an efficient

linear dynamical system based technique. The extracted temporal

features are further used to train linear SVMs as classifiers

for a particular shot class. In addition to demonstrating the efficacy

of our method on a novel dataset, we extend its applicability

to recognize complex events in large scale videos under unconstrained

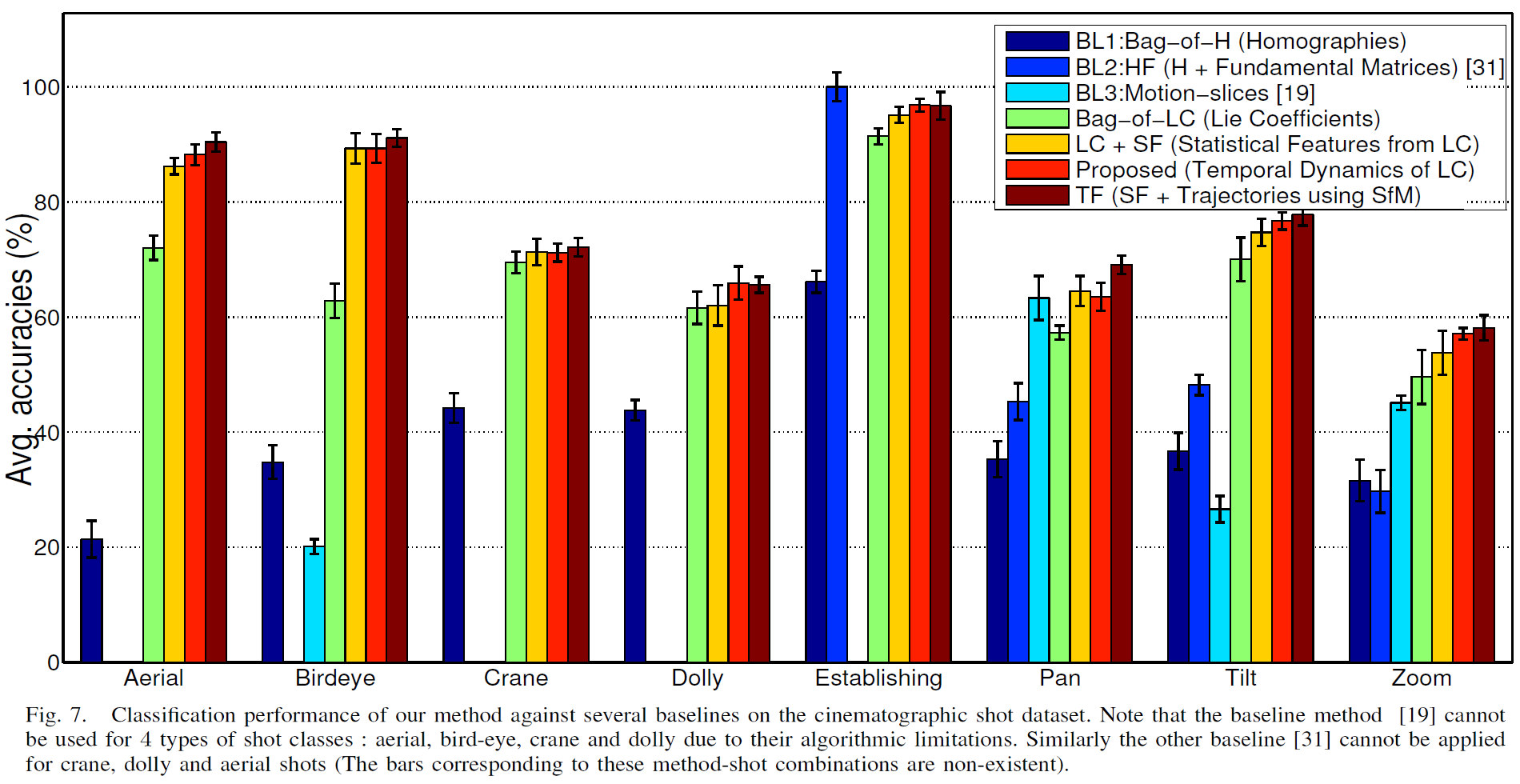

scenarios. Our empirical evaluations on eight cinematographic

shot classes show that our technique performs close to approaches

that involve extraction of 3-D trajectories using computationally

prohibitive structure from motion techniques.

Method Summary and Results

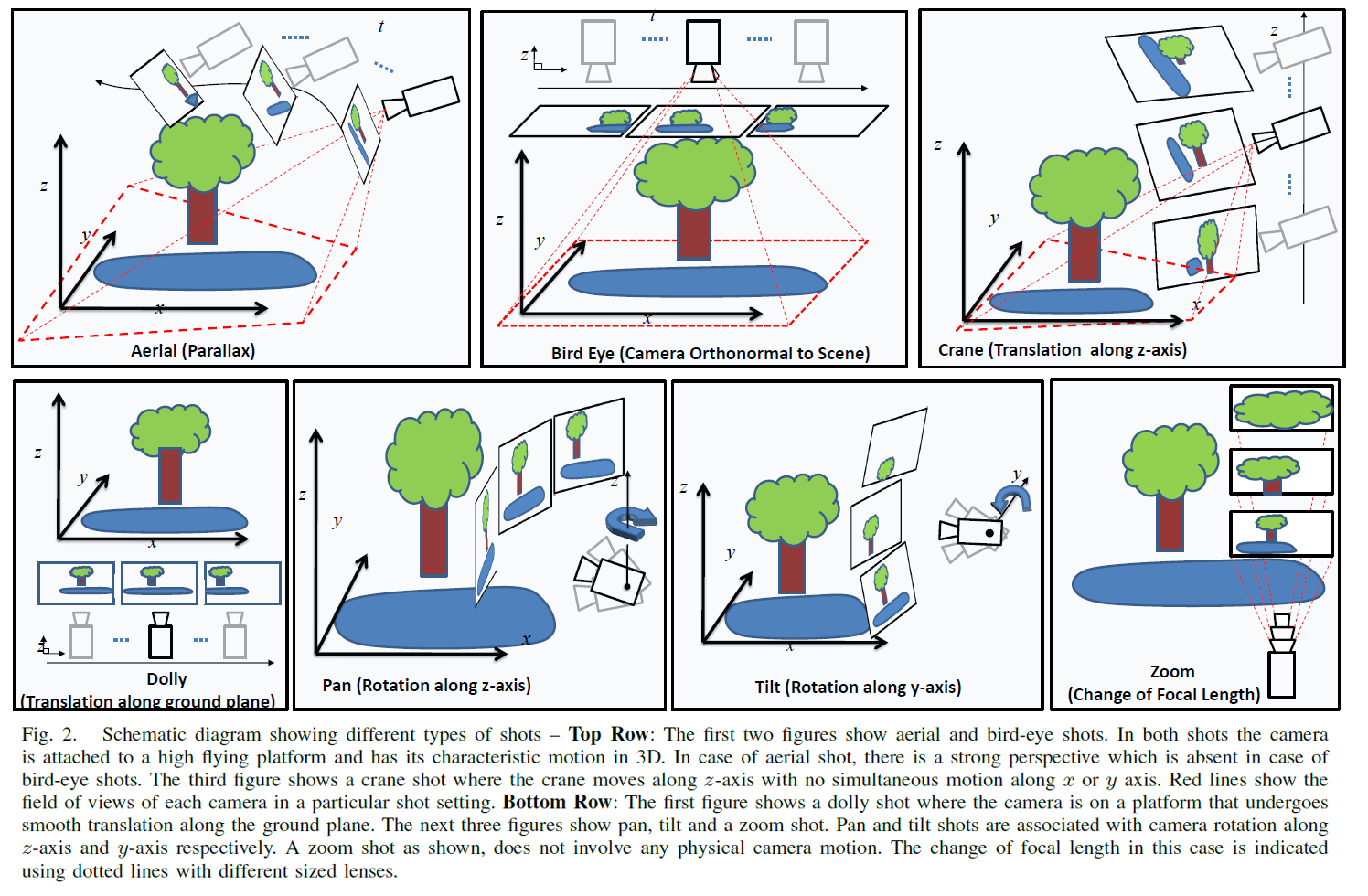

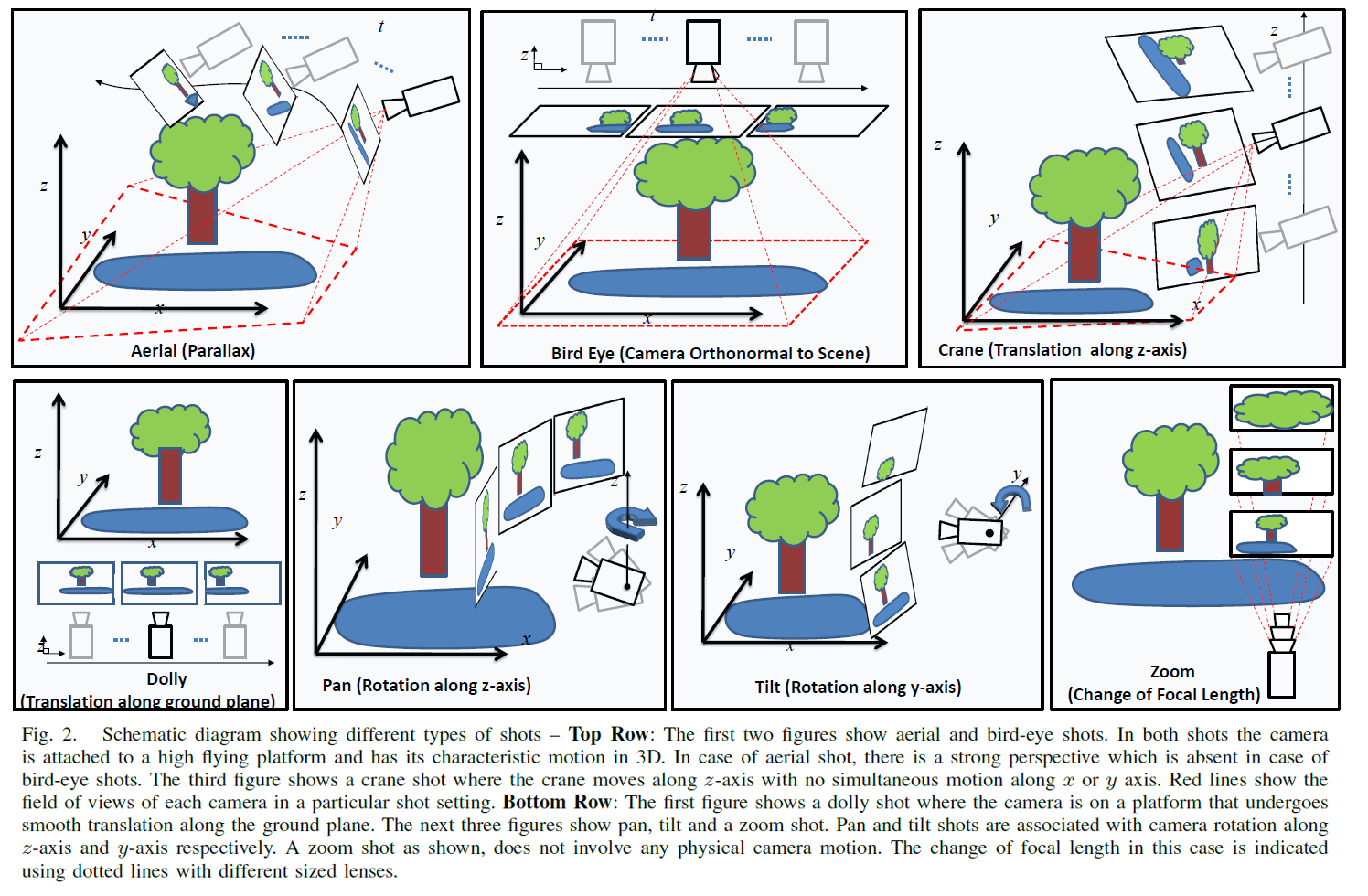

Camera motion in authored videos (commonly pan, tilt or zoom) is directly correlated with the high-level semantic concepts depicted in the shot. For example, a tracking shot, in which the camera translates on a moving platform, indicates the presence of a "following" concept. Video search engines can use such concepts at a later stage to perform high-level content analysis, such as detecting events in videos. This motivates us to explore the use of pure camera motion to solve the shot classification problem. Camera motion parameters, also known as telemetry, are very difficult to obtain directly, as few video cameras are equipped with sensors that can provide such accurate measurements. Furthermore, telemetry data is not generally available and is certainly not present in Internet or broadcast video.

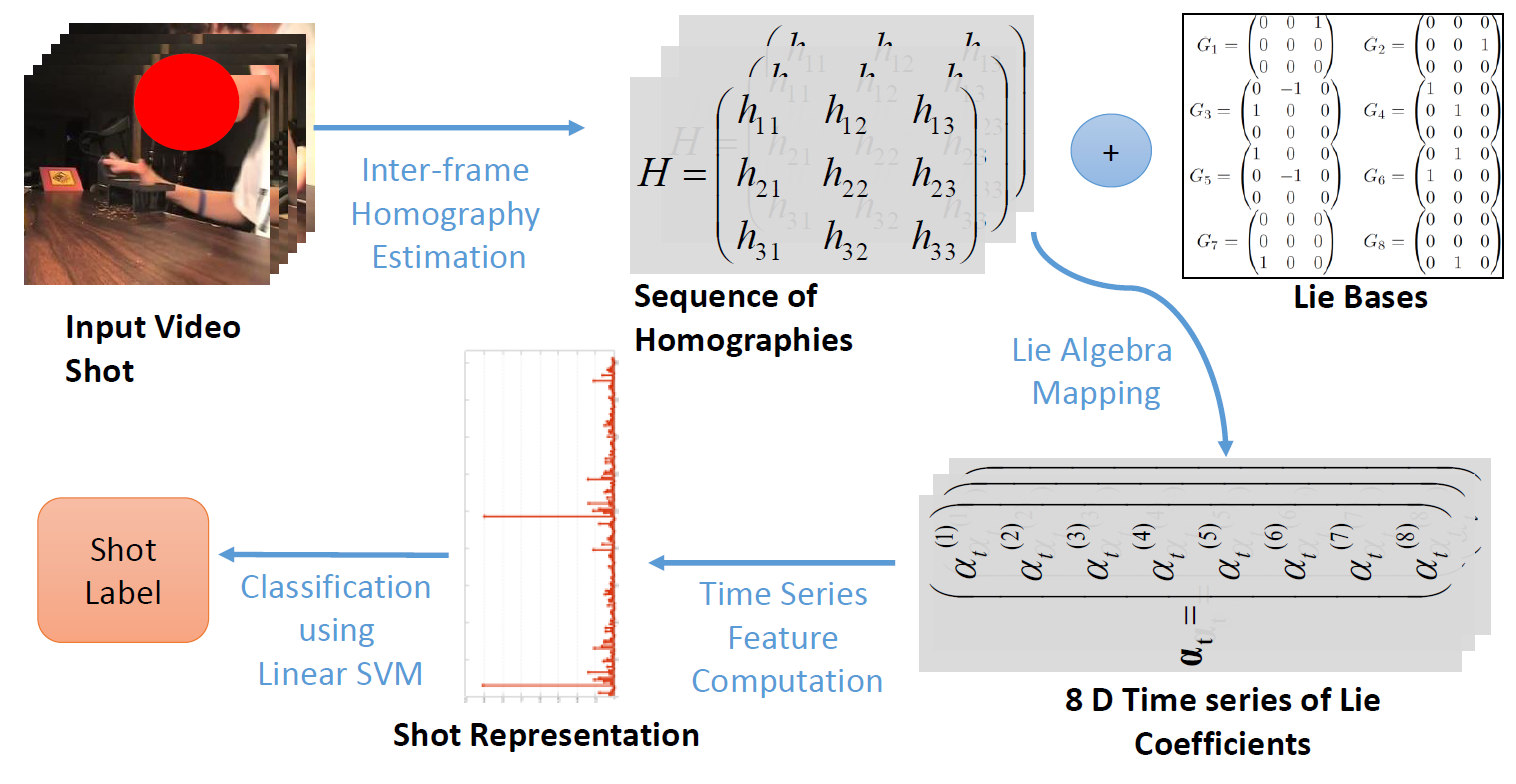

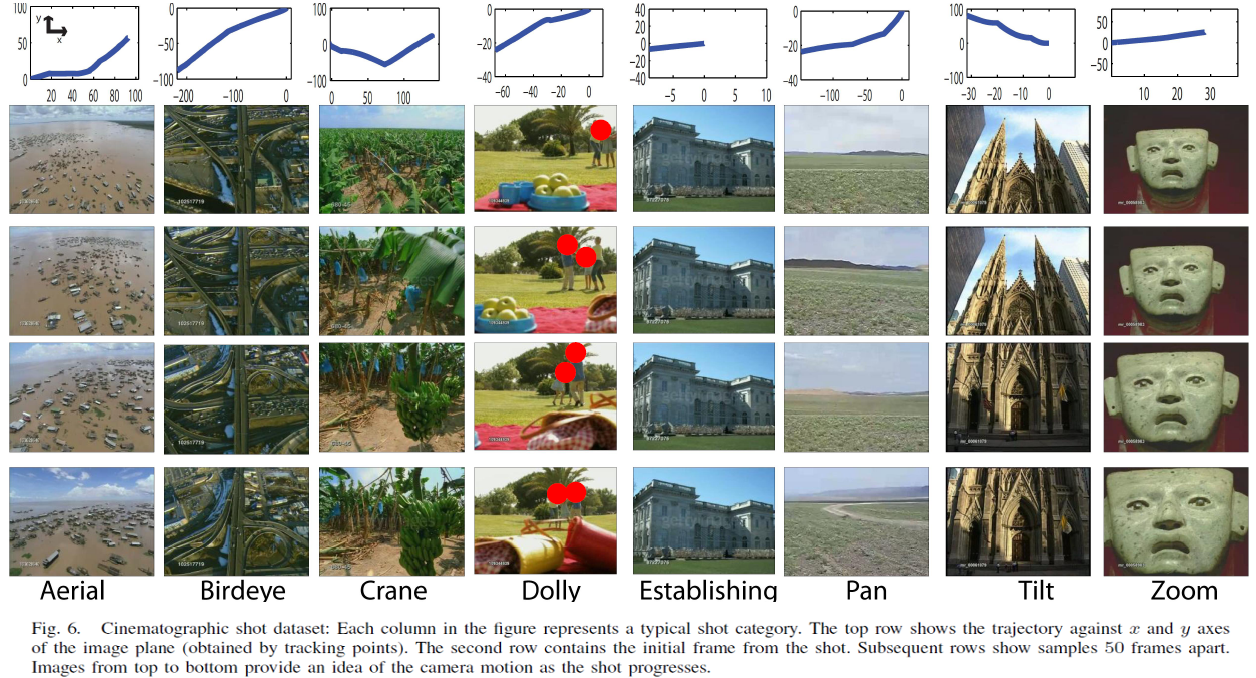

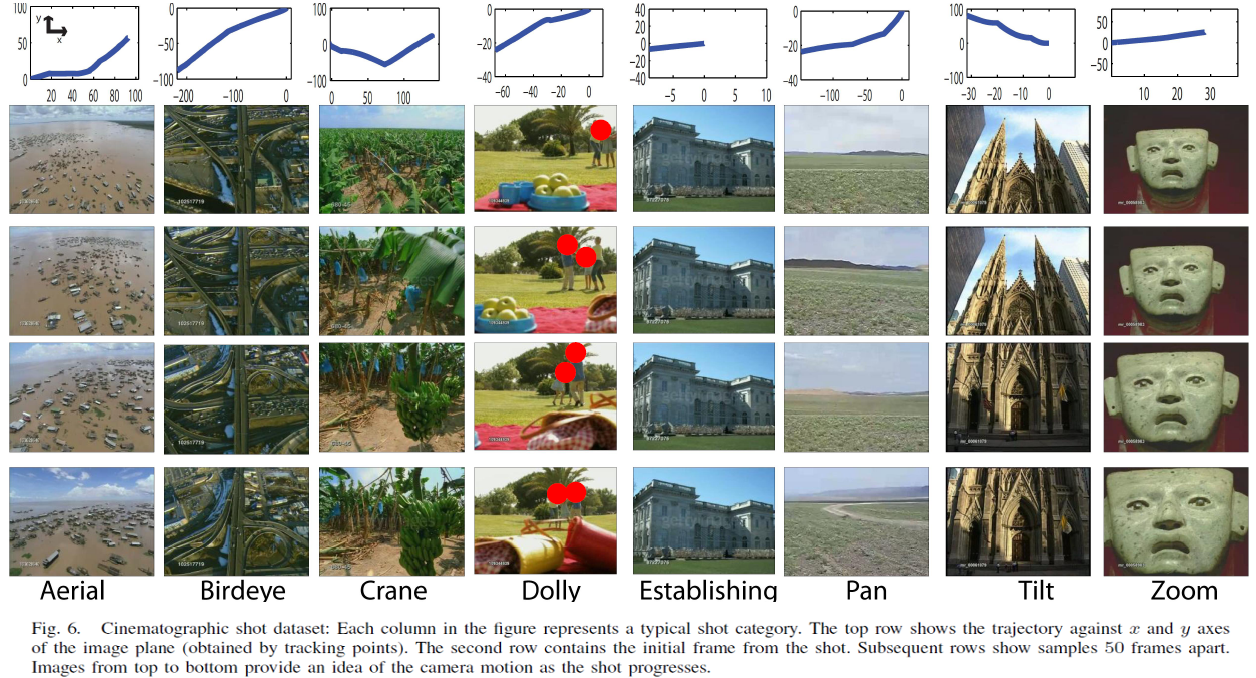

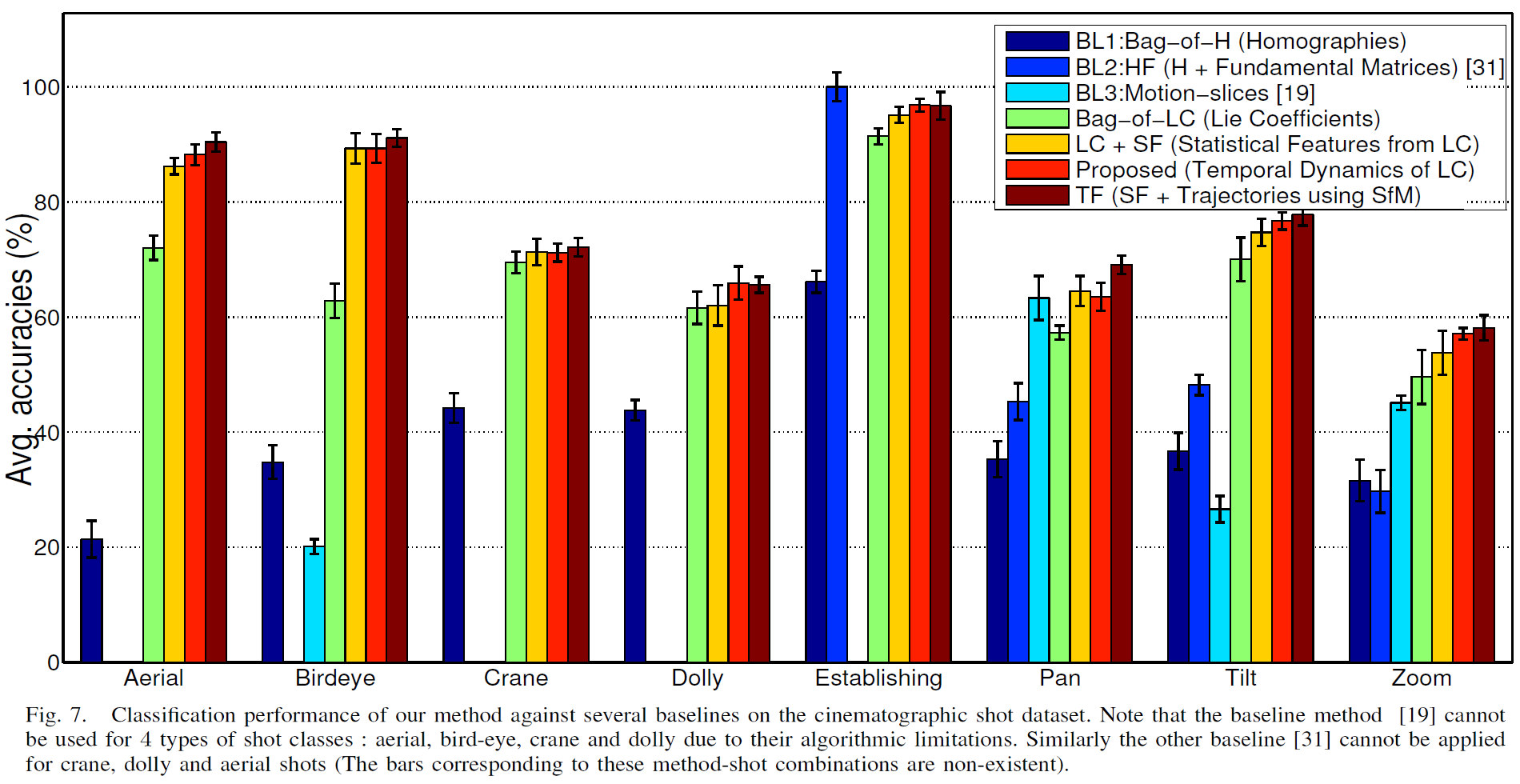

To this end, we propose the following methodology to represent the camera motion extracted from a video: (1) given a shot, pairwise homographies are computed between consecutive frames; (2) these homographies are mapped to a linear space using the Lie algebra defined on the projective group; (3) the coefficients in this linear space are used to construct multiple time series; and (4) representative features are computed from these time series for discriminative classification.
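
Steps (2) and (3) above can be illustrated with a minimal sketch. It assumes that each frame-to-frame homography has a positive determinant and is normalized to unit determinant before taking the matrix logarithm, and that the eight generators below are an acceptable basis for the Lie algebra; the basis ordering, the function names, and the numerical scheme (Denman-Beavers square roots followed by a truncated series) are illustrative choices, not details taken from the paper.

```python
import numpy as np

# Eight traceless generators spanning sl(3). The ordering and scaling are an
# illustrative assumption, not necessarily the basis used in the paper.
GENERATORS = np.array([
    [[0, 0, 1], [0, 0, 0], [0, 0, 0]],    # x-translation
    [[0, 0, 0], [0, 0, 1], [0, 0, 0]],    # y-translation
    [[0, -1, 0], [1, 0, 0], [0, 0, 0]],   # in-plane rotation
    [[1, 0, 0], [0, 1, 0], [0, 0, -2]],   # isotropic scaling (zoom)
    [[1, 0, 0], [0, -1, 0], [0, 0, 0]],   # aspect-ratio change
    [[0, 1, 0], [1, 0, 0], [0, 0, 0]],    # shear
    [[0, 0, 0], [0, 0, 0], [1, 0, 0]],    # projective term in x
    [[0, 0, 0], [0, 0, 0], [0, 1, 0]],    # projective term in y
], dtype=float)


def matrix_sqrt(A, iters=30):
    """Principal square root of a 3x3 matrix via Denman-Beavers iteration."""
    Y, Z = A.copy(), np.eye(3)
    for _ in range(iters):
        # Both updates use the previous Y and Z (tuple assignment).
        Y, Z = 0.5 * (Y + np.linalg.inv(Z)), 0.5 * (Z + np.linalg.inv(Y))
    return Y


def matrix_log(A, n_sqrt=8, n_terms=12):
    """Principal logarithm by inverse scaling and squaring."""
    for _ in range(n_sqrt):
        A = matrix_sqrt(A)            # bring A close to the identity
    X = A - np.eye(3)
    term, total = np.eye(3), np.zeros((3, 3))
    for k in range(1, n_terms + 1):   # log(I + X) = X - X^2/2 + X^3/3 - ...
        term = term @ X
        total += (-1) ** (k + 1) * term / k
    return (2 ** n_sqrt) * total      # undo the repeated square roots


def homography_to_lie_coeffs(H):
    """Map a 3x3 homography to 8 coefficients in the sl(3) basis above."""
    H = np.asarray(H, dtype=float)
    det = np.linalg.det(H)
    if det <= 0:
        raise ValueError("this sketch assumes a positive determinant")
    H = H / np.cbrt(det)              # unit determinant, so the log is traceless
    L = matrix_log(H)
    basis = GENERATORS.reshape(8, 9).T          # 9 x 8 design matrix
    coeffs, *_ = np.linalg.lstsq(basis, L.reshape(9), rcond=None)
    return coeffs


def shot_time_series(homographies):
    """Stack per-frame-pair coefficients into a (T, 8) array for one shot."""
    return np.stack([homography_to_lie_coeffs(H) for H in homographies])
```

With this basis, a pure translation by (3, -2) pixels maps to the coefficient vector (3, -2, 0, 0, 0, 0, 0, 0), and each column of the array returned by `shot_time_series` is one of the per-shot time series from which features would then be computed.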

In this paper, we make the following contributions: (1) we obtain global camera motion by robustly estimating frame-to-frame homographies, unlike approaches that rely on local optical-flow-based techniques, which are often noisy, or on full structure-from-motion, which is computationally expensive; (2) compared to approaches that use homographies directly for classification, our Lie-algebra-based representation of homographies is more accurate; (3) our global features, computed over a whole shot, take temporal continuity between frames into account, which makes them superior to orderless bag-of-words techniques, and they eliminate any need for explicit temporal alignment of shots of unequal lengths; (4) our representation can classify a broader category of shots than earlier approaches, and our dataset of eight cinematographic shot classes is freely distributed to the research community; (5) our method is more versatile than approaches that apply only to specific domains such as movies or sports, and it has fewer parameters to adjust than approaches that require explicit motion segmentation; and (6) finally, this is the first work to show how our novel camera motion representation can be used as a complementary feature for recognition of complex events in unconstrained Internet videos.

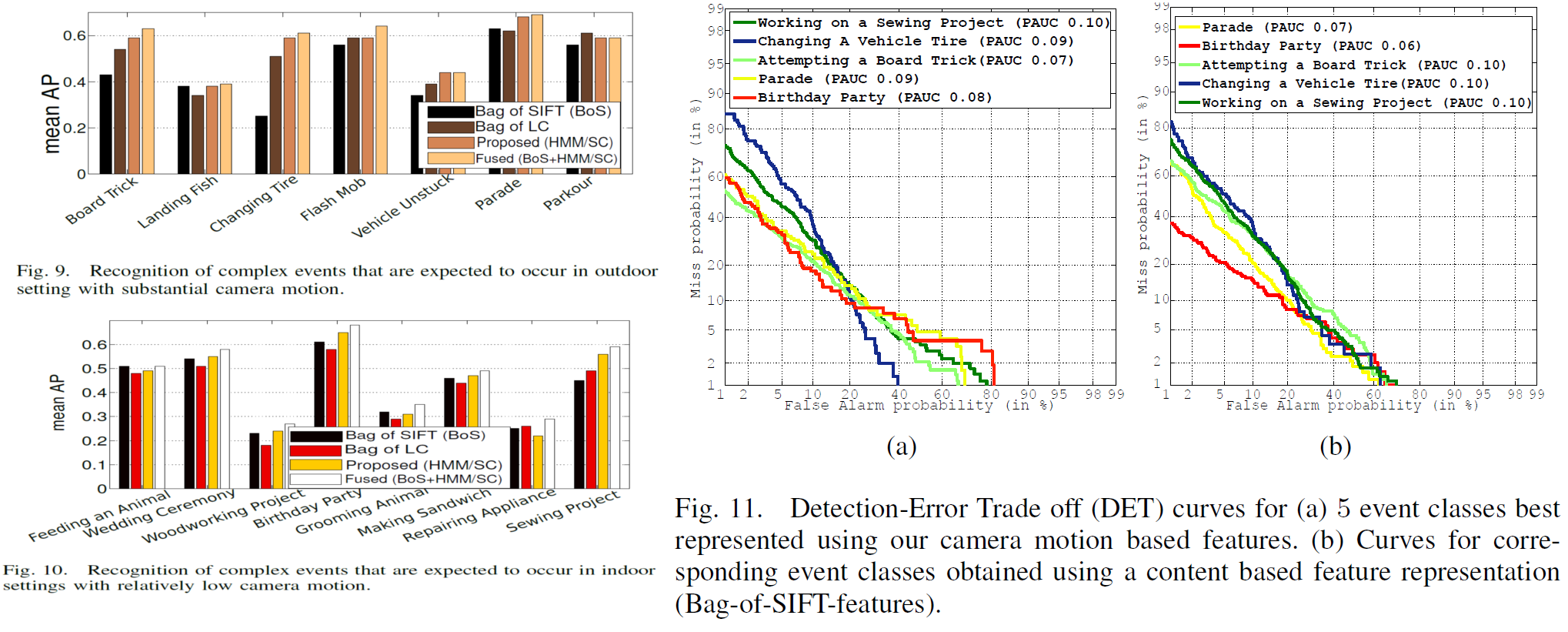

We perform an exhaustive comparative analysis of event recognition performance for two separate cases. In the first case, we report the average precision of event classifiers on events that are commonly observed in outdoor settings involving significant camera motion. The results are reported in Fig. 9. The second case involves events that are typically expected to occur in indoor settings, accompanied by limited camera motion. Fig. 10 reports results corresponding to these events. Among outdoor events, “Attempting a Board trick”, “Changing a Vehicle tire”, and “Parade” are well detected using our proposed HMM-based approach applied on top of the predefined shot classifier responses (HMM/SC). Among indoor events, “Birthday party” and “Working on a sewing project” are detected with high average precision. We also notice that, for all events, late fusion with a content-based classifier improves the result by 3-4%, which is strong evidence of the complementary nature of our feature. Interestingly, classifiers trained on Bag-of-LC alone score only 3.5-5% lower than HMM/SC. Thus, for even larger datasets, we can obtain a decent trade-off between speed and accuracy by replacing full shot classification followed by HMM training with this simpler approach.
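
The two quantities discussed above, average precision and late fusion, can be made concrete with a small sketch. It assumes the common non-interpolated definition of average precision and a simple weighted average of per-video scores as the fusion rule; the page does not state which variants were actually used, and the function names, the `weight` parameter, and the toy scores are hypothetical.

```python
def average_precision(scores, labels):
    """Non-interpolated AP: mean of the precision at each positive's rank."""
    ranked = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    hits, precisions = 0, []
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions) if precisions else 0.0


def late_fusion(scores_a, scores_b, weight=0.5):
    """Weighted average of two classifiers' scores (assumed fusion rule)."""
    return [weight * a + (1 - weight) * b for a, b in zip(scores_a, scores_b)]


# Toy example with made-up scores for four videos; 1 marks a positive.
labels = [1, 0, 1, 0]
motion_scores = [0.9, 0.8, 0.7, 0.6]     # hypothetical camera-motion classifier
content_scores = [0.2, 0.1, 0.9, 0.3]    # hypothetical content-based classifier

ap_motion = average_precision(motion_scores, labels)
ap_fused = average_precision(late_fusion(motion_scores, content_scores), labels)
```

In this toy case the camera-motion scores alone rank one negative above a positive, while the fused scores rank both positives first, which is the kind of complementarity that late fusion is meant to exploit.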


Code/Data

Software for Camera Motion descriptor is available here.

Cinematographic and wild shot class dataset is available here.

Multimedia Event Detection Dataset is available from TRECVID MED 2013 collection upon request from NIST.

Relevant Publications

- Subhabrata Bhattacharya, Ramin Mehran, Rahul Sukthankar, Mubarak Shah, "Cinematographic Shot Classification and its Application to Complex Event Recognition", In IEEE Transactions on Multimedia (TMM), vol. 16, no. 3, pp. 686-696, 2014. [UCF 2013 Graduate Engineering and Simulation Excellence Award, $800]