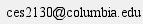

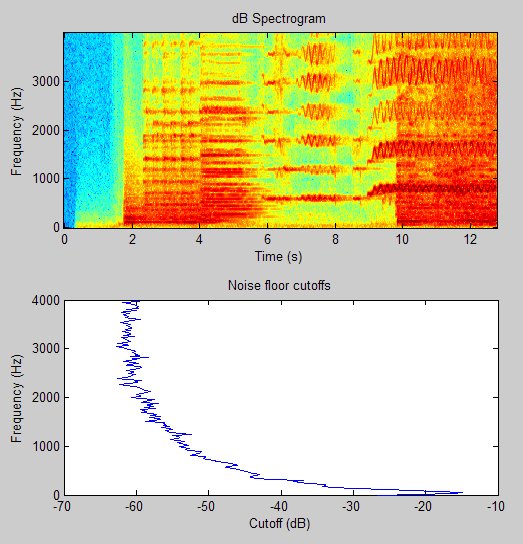

The problem then, of course, was figuring out what "small" was. In a perfect world, small would be zero. Unfortunately, this is not a perfect world, and the signals contained some extraneous noise. I assumed that the noise power would be approximately constant over time but would differ from frequency to frequency. So, after computing the spectrogram, I calculated the tenth percentile of the decibel values over time for each frequency and took that as the cutoff magnitude for that frequency. Here are the cutoffs I calculated, along with the spectrogram for comparison:

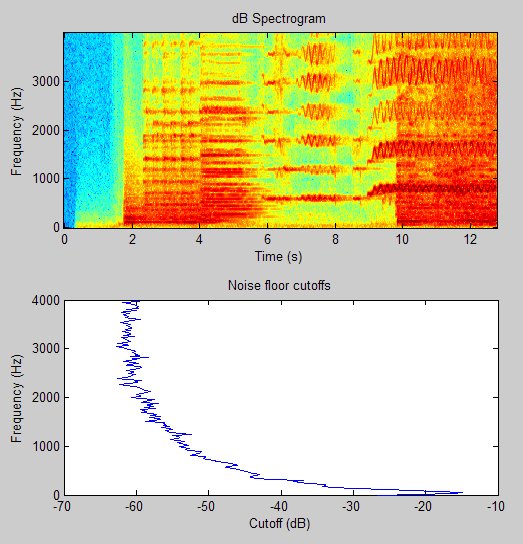
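As a rough illustration of the per-frequency cutoff, here is a minimal Python sketch. It assumes the spectrogram is stored as a list of rows, one per frequency bin, each holding the decibel values for every time slice. The names `percentile`, `frequency_cutoffs`, and `spec_db` are made up for this example, and the linear interpolation between ranks is just one common way to define a percentile, not necessarily the one used for the plot above.

```python
import math


def percentile(values, q):
    """Return the q-th percentile (0 <= q <= 100) of a non-empty sequence,
    interpolating linearly between the two nearest ranks."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100.0
    lo = math.floor(pos)
    hi = math.ceil(pos)
    return ordered[lo] + (pos - lo) * (ordered[hi] - ordered[lo])


def frequency_cutoffs(spec_db, q=10.0):
    """Compute one cutoff per frequency bin.

    spec_db[f][t] is assumed to be the level in dB of frequency bin f
    at time slice t. The cutoff for bin f is the q-th percentile of
    that bin's values over all time slices.
    """
    return [percentile(row, q) for row in spec_db]
```

Because each row is treated separately, a frequency band with a high noise floor gets a higher cutoff than a quiet one, which matches the assumption that noise differs between frequencies but not over time.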

Once I had my cutoffs, I just had to apply them. Basically, I counted how many frequencies were close to zero, that is, below their cutoffs, in each time slice. It turned out, however, that I couldn't use the raw counts directly because they fluctuated widely from one time slice to the next. Music, of course, does not tend to change so rapidly. So, I put the raw counts through a smoothing algorithm. Here are the results of applying the cutoffs:

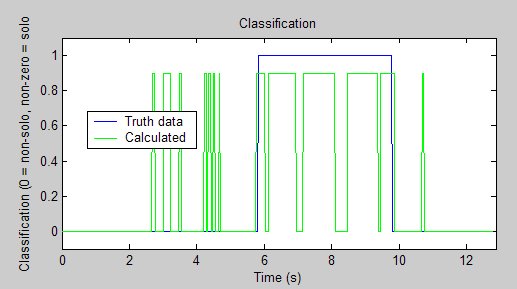
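The counting and smoothing steps could look something like the sketch below. The text does not say which smoothing algorithm was used, so the centered moving average here is purely an assumption, as are the window length and the names `count_quiet_bins`, `moving_average`, and `spec_db`.

```python
def count_quiet_bins(spec_db, cutoffs):
    """For each time slice, count the frequency bins whose level is
    below that bin's cutoff.

    spec_db[f][t] is assumed to be the level in dB of frequency bin f
    at time slice t; cutoffs[f] is the cutoff for bin f.
    """
    n_times = len(spec_db[0])
    return [
        sum(1 for f, row in enumerate(spec_db) if row[t] < cutoffs[f])
        for t in range(n_times)
    ]


def moving_average(values, window=5):
    """Smooth a sequence with a centered moving average.

    Near the edges the window is truncated, so the output has the
    same length as the input. This is a stand-in for whatever
    smoothing was actually applied.
    """
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed
```

A wider window gives a steadier curve at the cost of blurring the boundaries between passages, so the window length would need tuning against the time resolution of the spectrogram.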

So, how did the classification do? Let's take a look:

Well, it's not wonderful, but it's not too bad. The classifier correctly identified 81% of the solo music as solo and 91% of the non-solo music as non-solo.
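For reference, per-class rates like these can be computed by comparing the predicted label of each time slice with its true label, one class at a time. The sketch below assumes the labels are available as two equal-length lists; `per_class_accuracy` and the label strings are hypothetical names chosen for this example.

```python
def per_class_accuracy(truth, predicted):
    """Return, for each true label, the fraction of items with that
    label that were also predicted as that label."""
    rates = {}
    for label in set(truth):
        indices = [i for i, t in enumerate(truth) if t == label]
        correct = sum(1 for i in indices if predicted[i] == label)
        rates[label] = correct / len(indices)
    return rates


# Toy labels for illustration only; these are not the project's data.
truth = ["solo", "solo", "solo", "solo", "non-solo", "non-solo"]
predicted = ["solo", "solo", "solo", "non-solo", "non-solo", "non-solo"]
rates = per_class_accuracy(truth, predicted)
```

Reporting the two classes separately, rather than as a single overall accuracy, keeps a lopsided mix of solo and non-solo material from hiding poor performance on the rarer class.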

Christine Smit