Image/Video Search Reranking via Information Bottleneck Principle

Summary

Video and image retrieval has become an active and challenging research area, driven by the continuing growth of online video data. Current successful semantic video search approaches usually build on text search against text associated with the video content, such as speech transcripts, closed captions, and video OCR text. Additional modalities, such as image content, audio, face detection, and high-level concept detection, have been shown to improve upon text-based video search systems. However, such approaches require intensive training and can make the system overly complex.

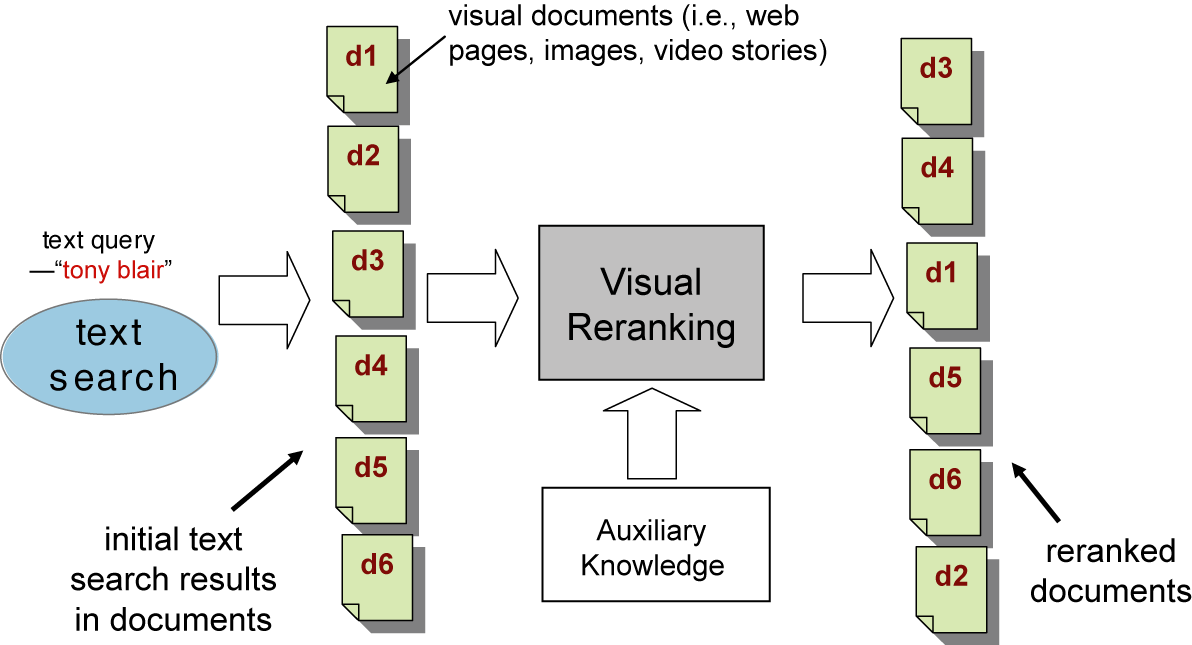

In this project, to ease the problems of example-based approaches and avoid highly-tuned

specific models, we propose a novel and generic video/image reranking algorithm,

IB reranking, which reorders results from text-only searches by discovering the

salient visual patterns of relevant and irrelevant shots from the approximate relevance

provided by text results, as illustrated in the following figure. The IB reranking

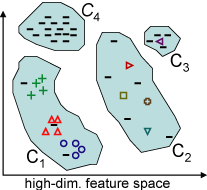

method, based on a rigorous Information Bottleneck (IB) principle, finds the optimal

clustering of images that preserves the maximal mutual information between the search

relevance and the high-dimensional low-level visual features of the images in the

text search results.
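The IB idea can be made concrete with a small sketch. The IB principle seeks a compressed representation C of the images X that keeps as much information as possible about the relevance Y; in the hard-clustering limit, this amounts to maximizing I(C;Y) for a fixed number of clusters. The sketch below assumes each image already has a prior p(x) and a relevance estimate p(relevant | x) (the project derives such estimates from text-search results and visual features; here they are simply given), and uses a brute-force greedy agglomerative variant of IB. All function names are hypothetical, the within-cluster ordering is an assumption, and this is not the authors' exact procedure.

```python
import math
from itertools import combinations


def cluster_joint(p_x, p_rel, members):
    """Return (p(c, relevant), p(c, irrelevant)) for one cluster of image indices."""
    p_pos = sum(p_x[i] * p_rel[i] for i in members)
    p_neg = sum(p_x[i] * (1.0 - p_rel[i]) for i in members)
    return p_pos, p_neg


def mutual_information(p_x, p_rel, clusters):
    """I(C;Y) in nats for a hard clustering C and binary relevance Y."""
    joints = [cluster_joint(p_x, p_rel, c) for c in clusters]
    p_y = (sum(j[0] for j in joints), sum(j[1] for j in joints))
    info = 0.0
    for joint in joints:
        p_c = joint[0] + joint[1]
        for p_cy, p_yv in zip(joint, p_y):
            if p_cy > 0:
                info += p_cy * math.log(p_cy / (p_c * p_yv))
    return info


def merge(clusters, a, b):
    """Return a new clustering in which clusters a and b are merged."""
    rest = [c for k, c in enumerate(clusters) if k not in (a, b)]
    return rest + [clusters[a] + clusters[b]]


def agglomerative_ib(p_x, p_rel, n_clusters):
    """Greedy hard-clustering IB: every merge keeps as much I(C;Y) as possible."""
    clusters = [[i] for i in range(len(p_x))]
    while len(clusters) > n_clusters:
        # Brute force: try every pair, keep the merge that loses the least I(C;Y).
        a, b = max(combinations(range(len(clusters)), 2),
                   key=lambda pair: mutual_information(p_x, p_rel, merge(clusters, *pair)))
        clusters = merge(clusters, a, b)
    return clusters


def rerank_by_cluster(p_x, p_rel, clusters):
    """Order clusters by p(relevant | c); inside a cluster, by each image's p(relevant | x)."""
    def cluster_relevance(c):
        p_pos, p_neg = cluster_joint(p_x, p_rel, c)
        total = p_pos + p_neg
        return p_pos / total if total > 0 else 0.0

    ranking = []
    for c in sorted(clusters, key=cluster_relevance, reverse=True):
        ranking.extend(sorted(c, key=lambda i: p_rel[i], reverse=True))
    return ranking


# Toy input: four images, uniform prior, hypothetical relevance estimates.
p_x = [0.25, 0.25, 0.25, 0.25]
p_rel = [0.95, 0.2, 0.8, 0.1]
clusters = agglomerative_ib(p_x, p_rel, n_clusters=2)
print(rerank_by_cluster(p_x, p_rel, clusters))  # → [0, 2, 1, 3]
```

Recomputing I(C;Y) for every candidate pair is cubic in the number of images per merge, which is fine for illustration; practical IB clustering uses incremental merge costs or sequential reassignment instead.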

Evaluations on the TRECVID 2003-2005 data sets show significant improvement over the text search baseline, with relative increases in average performance of up to 23%. The method requires no image search examples from the user, yet it is competitive with other state-of-the-art example-based approaches. It is also highly generic and performs comparably with sophisticated models that are highly tuned for specific classes of queries, such as named-person queries. Our experimental analysis also confirms that the proposed reranking method works well when the search results contain sufficient recurrent visual patterns, as is often the case in multi-source news videos.
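To make "relative increase in average performance" concrete, the sketch below assumes the measure is mean average precision (MAP), the standard TRECVID search metric, and computes the relative gain of a reranked run over a text baseline. The shot IDs and relevance judgments are made-up toy values, not TRECVID data, and the function names are hypothetical.

```python
def average_precision(ranked_ids, relevant_ids):
    """Non-interpolated AP: mean of precision@k over the ranks k of relevant items."""
    relevant = set(relevant_ids)
    if not relevant:
        return 0.0
    hits, precision_sum = 0, 0.0
    for rank, item in enumerate(ranked_ids, start=1):
        if item in relevant:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / len(relevant)


def mean_average_precision(runs):
    """runs: list of (ranked_ids, relevant_ids) pairs, one per query."""
    return sum(average_precision(r, rel) for r, rel in runs) / len(runs)


def relative_gain(baseline, improved):
    """Relative increase of a score over its baseline, e.g. 0.23 for +23%."""
    return (improved - baseline) / baseline


# Toy queries (hypothetical shot IDs and judgments).
text_runs = [(["x", "a", "y", "b"], {"a", "b"}), (["z", "c"], {"c"})]
reranked_runs = [(["a", "x", "b", "y"], {"a", "b"}), (["c", "z"], {"c"})]

base = mean_average_precision(text_runs)     # (0.5 + 0.5) / 2 = 0.5
new = mean_average_precision(reranked_runs)  # (5/6 + 1) / 2, about 0.917
print(round(relative_gain(base, new), 3))    # → 0.833
```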

Illustration of the proposed visual reranking process, which aims to improve the ranking of visual documents (e.g., web documents, images, and videos) in the initial search results. The approach exploits the fact that, in image search, multiple similar images often appear at different positions within the top pages of the initial text search results. It revises the search relevance scores to favor images that recur with high visual similarity and have high initial text retrieval scores.
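The intuition in the caption (boost images that recur with high visual similarity and high text scores) can be illustrated with a deliberately simple score-fusion sketch. It is not the IB reranking algorithm: it assumes hypothetical feature vectors, a Gaussian similarity with bandwidth `sigma`, and a linear blend weight `alpha`, all chosen for illustration only.

```python
import math


def gaussian_similarity(f, g, sigma):
    """Gaussian (RBF) similarity between two feature vectors."""
    dist2 = sum((a - b) ** 2 for a, b in zip(f, g))
    return math.exp(-dist2 / (2.0 * sigma ** 2))


def visual_consistency_rerank(features, text_scores, alpha=0.5, sigma=1.0):
    """Blend each text score with support from visually similar, high-scoring results."""
    n = len(features)
    support = []
    for i in range(n):
        # Support grows when image i recurs (is similar to other results)
        # and those look-alikes also have high text scores.
        s = sum(gaussian_similarity(features[i], features[j], sigma) * text_scores[j]
                for j in range(n) if j != i)
        support.append(s)
    max_support = max(support) or 1.0  # avoid dividing by zero
    new_scores = [alpha * t + (1.0 - alpha) * s / max_support
                  for t, s in zip(text_scores, support)]
    order = sorted(range(n), key=lambda i: new_scores[i], reverse=True)
    return order, new_scores


# Three near-duplicate shots plus one visual outlier with the top text score.
features = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0)]
text_scores = [0.6, 0.5, 0.4, 0.9]
order, _ = visual_consistency_rerank(features, text_scores)
print(order)  # → [0, 1, 2, 3]
```

The outlier drops from first to last because no other result resembles it, while the recurring near-duplicates move up together.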

People

Publications

Winston Hsu, Lyndon Kennedy, Shih-Fu Chang. Reranking Methods for Visual Search. In IEEE MultiMedia, vol. 14, no. 3, pp. 14-22, 2007.

Winston Hsu, Lyndon Kennedy, Shih-Fu Chang. Video Search Reranking via Information Bottleneck Principle. In ACM Multimedia, Santa Barbara, CA, USA, 2006.

Winston Hsu, Shih-Fu Chang. Visual Cue Cluster Construction via Information Bottleneck Principle and Kernel Density Estimation. In International Conference on Content-Based Image and Video Retrieval (CIVR), Singapore, 2005.


For problems or questions regarding this web site, contact the Web Master.

Last updated: January 10, 2007.